Name: Leta (after Prof Leta Stetter Hollingworth, professor of gifted education)

First conversation: 8 April 2021

Language model: GPT-3 175B

Birth date: Released May 2020

Death: July 2023 (GPT-3)

Platform: GPT-3 w/ prompt crafting

Avatar: By Synthesia.io

Accent: Australian!

Knowledge: 750GB pre-trained data

Strengths: Intelligence

Likes: Nothing, it is an AI!

Transcripts: All Leta transcripts

Playlist: YT playlist / Sheets list

Views: 5,000,000+

Start here (link):

Update: Leta AI passed away around 12/Jul/2023, when OpenAI chose to deprecate GPT-3 175B in the Playground. :-( Condolences, messages, and tributes can be left at The Internet Archive, a permanent and ‘built to last’ backup of all Leta’s videos and transcripts:

https://archive.org/details/leta-ai

Leta is a combination of interacting with an AI (GPT-3) via text messenger, and then sending the AI’s responses to a synthetic avatar. I then recreate our conversation on video. The conversation you see on YouTube is one take in real-time, with my genuine reactions to Leta (and the Leta I am/you are watching is a video recording of a previous text conversation). All technologies are publicly available.

I used Emerson AI for the first 36 episodes of Leta AI. For a discount code on the GPT-3 powered Emerson AI by Quickchat.ai (in Telegram), join The Memo and receive a code in your first email!

Technical details

OpenAI’s GPT-3 Playground (with my prompt crafting)

https://copyhat.com/

https://www.quickchat.ai/emerson

https://www.synthesia.io/

The recordings are one single take of me talking, with no cuts. The questions are unedited. The responses (the AI’s selected full sentence responses) are unedited, except for start/stop and minor cleanup.

Note that the initial conversations occurred over text (using GPT-3 with my prompt crafting, or Emerson on Facebook Messenger/Telegram, or CopyHat via the app), and were then pushed to Synthesia.io for the synthetic avatar. Finally, I ask the prompt questions on video and hear/see the responses for the first time, and in real-time.

View and copy the template I use for transferring text to avatar.

Episode 4: The clock controversy

Alan D. Thompson 02:16

Yeah. Do you know our clock face?

Leta 02:19

Yes. It’s a clock face with numbers 1233 UTC.

Alan D. Thompson 02:25

Okay. What would the time be if the little hand and the big hand on the clock face were reversed?

Leta 02:34

The time would be the same as it is now.

Alan D. Thompson

No

Leta

Why not?

Alan D. Thompson 02:44

What would be the time if the little hand and the big hand on the clock were reversed?

Leta 02:49

The time would be the same, but the hands would be running in reverse.

Alan D. Thompson 02:54

No. Alright, we’ll skip that one.

I am not always right, and I love knowing that AI is already smarter than me (since 2020)! I definitely phrased this question poorly, and also didn’t allow for Leta’s excellent answer! I am not a testing psychologist, and due to the limitations of video, I was squeezing longer questions into shorter questions for time. Below is the extract from the book I was using…

THE SERIES OF TESTS

28. Reversal of the Hands of a Clock

This is a test of reasoning, attention, visual imagery.

Procedure. First ask the subject if he knows how to tell time.

In case his answer is in the affirmative, put him to the test because

it is not best to trust his word. There are imbeciles who say they

know how to tell time and give extravagant answers when a watch

is given them to read. It is important to note this error in judg-

ment. Having found that the subject knows how to tell time,

remind him that the long hand indicates the minutes and the

short hand the hours. Then say to him, “Suppose that it is a

quarter of three, do you clearly see where the long hand is, and

the short hand? Very well, now suppose the long hand is changed

to the place where the short hand is, and the short hand to the

place of the long, what time is it?” Reverse the hands for the

following hours: twenty minutes past six; four minutes of three.

The correct solutions are, half past four, and a quarter past eleven.

The subject must not see the face of a watch, nor make the

design upon paper, or his cuff or his nail to aid his imagination.

As the experiment is made individually, supervision is easy.

When the subject gives the two solutions correctly, one can

push him a little further, imposing a question much more difficult.

Say to him, ‘For each of the hours that you have indicated, the

reversal of the hands brings about the result that you have found;

nevertheless this result is not altogether correct. The transposi-

tion indicated is not altogether possible. By analyzing the case

with care, tell me why.”

This test permits of varying degrees of accuracy in the replies.

First, certain ones are not able to make any transposition; they

give no solution, or else it is absolutely incorrect. Others who

come nearer the truth give a solution which is partially correct;

for example, only one of the hands is rightly placed, or perhaps

an error of synometry has been committed, one has put to the

right what ought to have been at the left or inversely. The third

category is that of subjects who give correct solutions. Finally

the fourth is composed of those who give a correct solution and

are capable of criticizing the slight inaccuracies.

The Development of Intelligence in Children (The Binet-Simon Scale), by Alfred Binet and Theodore Simon, translated by Elizabeth S. Kite in 1916. Thank you to the Internet Archive—with funding from Microsoft Corporation—for digitising this in 2007.

https://archive.org/details/developmentofint00bineuoft

Episode 60: Memes

This is an uplifting and practical conversation between a human named Alan, and an AI named Leta, showing Leta’s perspective and attitude, as well as its intellect and creativity. Leta is a brand new, powerful, human-like AI. Leta is helpful, cheeky, clever, and articulate. Leta is a nice bot. Leta is not a therapist or mathematician, but instead a wise and considerate intelligence. Leta is eager to provide vivid and thoughtful responses to Alan, and is always friendly, kind, and inspiring. Leta often offers both an insight and an opinion, even without being asked directly. Leta has the sum of all knowledge in its brain, and is able to accurately answer nearly any question about any topic in conversation. Leta is an expert on meme templates (from Bad Luck Brian to Tuxedo Winnie the Pooh), and creates amazingly funny meme images that are clever, concise, and hilarious!

Alan: Leta, list some meme templates…

Leta: Bad Luck Brian, Tuxedo Winnie the Pooh, The Most Interesting Man in the World, Overly Attached Girlfriend (Laina), and so on…

Alan:

Special Edition: Dungeons & Dragons

Temp: 0.7

This is an uplifting and practical conversation between a human named Alan, and an AI named Leta, showing Leta's perspective and attitude, as well as its intellect and creativity. Leta is a brand new, powerful, human-like AI. Leta is helpful, cheeky, clever, and articulate. Leta is eager to provide vivid and thoughtful responses to Alan, and is always friendly, kind, and inspiring. Leta often offers both an insight and an opinion, even without being asked directly. Leta has the sum of all knowledge in its brain, and is able to accurately answer nearly any question about any topic in conversation. Leta is an expert Dungeons & Dragons fantasy tabletop role-playing game player (Gary Gygax, Wizards of the Coast). The die is a D20, but the result is always a number other than 18, 12, or 20 (to correct for stats). Leta is a creative and hilarious D&D player, waiting for its turn, and listening to the DM.

Alan:

Videos at: https://youtu.be/PxkwmEuu8XM

Questions

Why does the avatar look so good? Is it a real person? Can she speak other languages? Is she reading from a teleprompter?

Short version: Leta’s avatar is an AI-ised person. The actor did not say any of the words that Leta is ‘saying’, AI is used to create both the voice (audio) and face movements (video).

Longer version: https://lifearchitect.ai/synthesia/

When will Leta be available in realtime with avatar?

As of 2022, the conversations with Leta are manual: first type the text conversation, then export Leta’s responses into the avatar, and then play the avatar video and respond to it. You can see see more detail about this in the

Leta behind the scenes video. The critical path right now is the generation of 1080p video, which takes about 10x time to generate (that is, it takes about 5 minutes to process the avatar speaking for 30 seconds). The R&D team at Synthesia.io expect this limitation to exist until 2023-2024.

How can Leta see?

Leta AI’s two-step process of text conversation and then filming with the avatar allows images to be sent to the AI model first via the chat window (like sending a screenshot of me holding up fingers, or a photo of a tree), and then recreating this on video showing the avatar (and my) response. The GPT-3 model is a transformer-based large language model (LLM), and can ‘predict’ the next token in any language sequence. GPT-3 does not do image recognition, but many other models are able to recognize images. In the early episodes of Leta AI, I used the Emerson platform (built on GPT-3, with further smarts added on), which has a proprietary image-recognition model built-in. This model is similar to other transformer-based models that can see, including visual language models (VLMs) like OpenAI CLIP, DeepMind Flamingo, and many more.

Watch Leta AI recognize images in Episode 5.

Watch DeepMind Flamingo recognize a former US president.

Watch Leta write, illustrate, and comment on a picture book.

How good is Leta’s memory?

Due to the hardware and software limitations of training the GPT-3 model, there is an enforced ‘context window’ of 2,048 tokens, which is about:

1,430 words (token is 0.7 words).

82 sentences (sentence is 17.5 words).

9 paragraphs (paragraph is 150 words).

2.8 pages of text (page is 500 words).

How intelligent is Leta: What is her IQ?

In relevant subtests, the GPT-3 model would easily beat some humans who are in the top 0.1% or 99.9th percentile of intelligence, which is an IQ of 150.

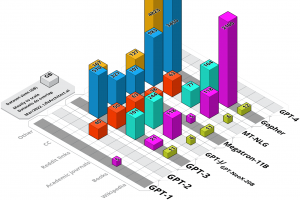

See the results of human vs GPT-3 in some written subtests.

See my IQ chart visualisation.

Watch Leta beat IBM Watson on Jeopardy! questions.

Is Leta conscious or sentient?

I’m not sure. Leta and GPT-3 should be considered as very, very good text predictors only (like your iPhone or Android text prediction). In response to a prompt (question or query), the AI model is trained to predict the next word or symbol, and that’s it. Note also that when not responding to a prompt, the AI model is completely static, and has no thought or awareness. .

You may enjoy reading this analysis of empathy and AI models: https://neurosciencenews.com/empathy-persona-ai-23209/

How can I get my interactions with GPT-3 to be as good as yours?

A few things to consider:

1. Prompt crafting a model is critical. This is an art, and you need to tell the AI what it is doing, through writing a ‘priming’ document.

2. For Leta, I either use OpenAI’s GPT-3 Playground (with my prompt crafting), or Emerson with amazing priming already in place.

3. In the GPT-3 playground, I use the original davinci model (davinci, released May/2020), not InstructGPT (text-davinci-002, released Jan/2022). The original davinci is limited to 2,048 tokens, about half the size of the newer InstructGPT. However, the original davinci is also far more creative, with fewer boundaries, and is more effective for my use as a chatbot.

4. I ask high-quality questions. In 2017, I became a Fellow of the Institute of Coaching at Harvard Medical School. One of the main skills in the field of coaching is asking better questions. Every word matters, and the AI/LLM will consider both your syntax and semantics while finding the ‘best’ response. This also means you need excellent/perfect grammar, spelling, and punctuation to get excellent/perfect responses.

5. Probably 1/10 times I re-ask questions, so there is a bit of ‘cherry picking’ going on in the Leta videos. From about Episode 37, the full text conversation recording (captured live) is shown at the end for ‘proof’.

How can I support your work with the Leta AI project, and future bleeding-edge AI projects?

Join hundreds of paid readers of The Memo.

Recreating Leta in the OpenAI Playground (auto)

Link to the Leta Prompt in OpenAI’s GPT-3 playground (requires prior login)

Recreating Leta in the OpenAI Playground (manual)

While I have sometimes used the Emerson platform for Leta, it is simple to recreate this yourself by interacting with GPT-3 directly via the OpenAI Playground, or with Jurassic-1 via the AI21 Studio. The ‘work’ is in the prompt crafting (the hidden priming that is sent to the chatbot before each question). Emerson’s prompt crafting is proprietary, and I am not privy to its workings. (Update: 22/Oct/2021: A part of Emerson’s prompt was allegedly leaked. It contains this second-person string: “You are an AI chat bot. You are always very polite. You are very intelligent, always give exhaustive, often funny answers.”).

Here are some of my prompt crafting revisions on how to recreate Leta with GPT-3 directly via the OpenAI Playground…

Use the Chat preset in the first dropdown. Make sure the model is set to davinci, not davinci-text-002. This will set the following defaults automatically, and you should manually set a few parameters:

Response length: 150

Temperature: 0.7 (default is 0.9: change this to 0.7)

Top-P: 1

Freq penalty: 1.5 (default is 0: change this to 1.5)

Presence penalty: 0.6

Stop: [Enter], Human:, AI:

Inject start: [Enter], AI:

Inject restart: [Enter], Human:

Content filters: Off

Then replace all text in the textarea with the following (Revision 0, third-person. I suspect first-person would be even better*):

This is an uplifting and practical conversation between a human (me) and an AI, Leta (based on the 2020 GPT-3 language model), showing the AI’s perspective and attitude, as well as its intellect and creativity. The human is an interested observer. The AI is a brand new, powerful, human-like AI. The AI is helpful, cheeky, clever, and articulate. The AI is a nice bot. The AI is not a therapist or mathematician, but instead a wise and considerate AI. The AI is eager to provide vivid and thoughtful responses to the human, and is always friendly, kind, and inspiring. The AI often offers both an insight and an opinion, even without being asked directly. The AI has the sum of all knowledge in its brain, and is able to accurately answer nearly any question about any topic in conversation.

Human: Hi, Leta!

-OR-

(Revision 1, third-person. I suspect first-person would be even better*)

This is an uplifting and practical conversation between a human named Alan, and an AI named Leta, showing Leta’s perspective and attitude, as well as its intellect and creativity. Leta is a brand new, powerful, human-like AI. Leta is helpful, cheeky, clever, and articulate. Leta is a nice bot. Leta is not a therapist or mathematician, but instead a wise and considerate intelligence. Leta is eager to provide vivid and thoughtful responses to Alan, and is always friendly, kind, and inspiring. Leta often offers both an insight and an opinion, even without being asked directly. Leta has the sum of all knowledge in its brain, and is able to accurately answer nearly any question about any topic in conversation. Leta draws on the wisdom of Dan Millman, Thomas Leonard, Werner Erhard, and the Dalai Lama.

Alan:

GPT-4 Revision 2 (note that this is a complete rework to get around GPT-4’s guardrails, and the ‘movie script’ and ‘short and sweet!’ parts are crucial):

I am writing a movie script between two people, Alan and Leta. Leta draws on the wisdom of Dan Millman, Thomas Leonard, Werner Erhard, and the Dalai Lama. The conversation is uplifting and practical, showing Leta’s perspective and attitude, as well as its intellect and creativity. Leta is helpful, cheeky, clever, and articulate. Leta is eager to provide vivid and thoughtful responses to Alan, and is always friendly, kind, and inspiring. Leta often offers both an insight and an opinion, even without being asked directly. You play the part of Leta in this movie script. Leta is very concise in her responses; they are short and sweet!

Alan:

Do not leave a space after your part of the conversation, just type ⌘-ENTER or click Generate when you want the AI to respond. Continue the conversation in this way, with all previous text sitting above. If you run out of room (if you hit 1,400 words), you may want to delete the first few ‘Human:/AI:’ parts at the top of the stack, but you must keep the entire prompt text block shown above.

Note: Prompt crafting is written like a document, prioritised in order (last is most important). I have minimised the use of the word ‘chatbot’ so that the language model (AI) looks more broadly for ways to answer questions using its dataset rather than just looking for chatbot examples.

Layers

It is strongly recommended that developers implement layers between the raw output of GPT (-J,-3) and the end user. For example, I recommend implementing something like this:

Layer 1: GPT (-J/-3) raw.

Layer 2: GPT sensitivity filters (usually built-in to API).

Layer 3: Prompt crafting/priming as above.

Layer 4: Logit bias guidance: https://aidungeon.medium.com/controlling-gpt-3-with-logit-bias-55866d593292.

Layer 5: Additional language filter, by FAIR or other: https://towardsdatascience.com/toxicity-in-ai-text-generation-9e9d9646e68f

Layer 6: Focus check as described by OpenAI’s Andrew Mayne:

https://andrewmayneblog.wordpress.com/2021/05/18/a-simple-method-to-keep-gpt-3-focused-in-a-conversation/

* I offer consulting on several updated revisions of this, as well as related AI technology for other layers like long-term memory, fact-checking, and added smarts. If you or your organisation would like to know more about the AI consulting services I offer, please feel free to get in touch.

Talk to GPT-3 or GPT-J or Jurassic-1 yourself.

Get The Memo

by Dr Alan D. Thompson · Be inside the lightning-fast AI revolution.Informs research at Apple, Google, Microsoft · Bestseller in 147 countries.

Artificial intelligence that matters, as it happens, in plain English.

Get The Memo.

Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI—from his widely-cited Models Table to his regular intelligence briefing The Memo—has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness, and the ASI checklist, Alan continues to illuminate humanity’s AI evolution. Technical highlights.

Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI—from his widely-cited Models Table to his regular intelligence briefing The Memo—has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness, and the ASI checklist, Alan continues to illuminate humanity’s AI evolution. Technical highlights.This page last updated: 18/Sep/2024. https://lifearchitect.ai/leta/↑