The image above was generated by AI for this paper (DALL-E 3).[1: Image generated in a few seconds, on 1 November 2023, text prompt by Alan D. Thompson: ‘child’s drawing of a big blue sky, comforting, wide’. The prompt was rewritten by GPT-4 to be ‘Widescreen child’s drawing showcasing an expansive blue sky that brings feelings of calm and warmth.’ https://chat.openai.com/c/d80e2b88-b284-47df-9d9b-2c76e37482f5]

Alan D. Thompson

December 2023

Watch the video version of this paper at: https://youtu.be/eivfTa4YpNA

All reports in The sky is... AI retrospective report series (most recent at top):

| Date | Report title |
| --- | --- |
| End-2025 | The sky is supernatural |
| Mid-2025 | The sky is delivering |
| End-2024 | The sky is steadfast |
| Mid-2024 | The sky is quickening |
| End-2023 | The sky is comforting |
| Mid-2023 | The sky is entrancing |
| End-2022 | The sky is infinite |
| Mid-2022 | The sky is bigger than we imagine |
| End-2021 | The sky is on fire |

‘I think it’s time we called [GPT-4 and other LLMs] an intelligent system… There is a lot that you can do with a trillion parameters… It could absolutely build an internal representation of the world, and act on it…’

— Sébastien Bubeck, Microsoft Research (April 2023)[2: https://youtu.be/qbIk7-JPB2c?t=336]

Author’s note: I provide visibility to family offices, major governments, research teams like RAND, and companies like Microsoft via The Memo: LifeArchitect.ai/memo.

My first degree—all the way back in 2001—was in computer science with a focus on AI and psychology. While I took nearly two decades off to serve human intelligence and high performance (especially in child prodigies), GPT-3 coaxed me back to artificial intelligence in 2020. Those first few years sitting at my desk felt isolating and exasperating. Even with five million sets of eyeballs joining me in the 2021 Leta AI conversations, I was deeply concerned that the rest of the world was missing the beginning of this revolution.

2023 has brought a much-needed sense of peace and comfort to my role in AI. Here are the big takeaways for the year in short dot points. (‘GPT’ here refers to several different OpenAI models including gpt-3.5-turbo, GPT-4, and GPT-4-turbo served through the ChatGPT interface, playground, and API.)

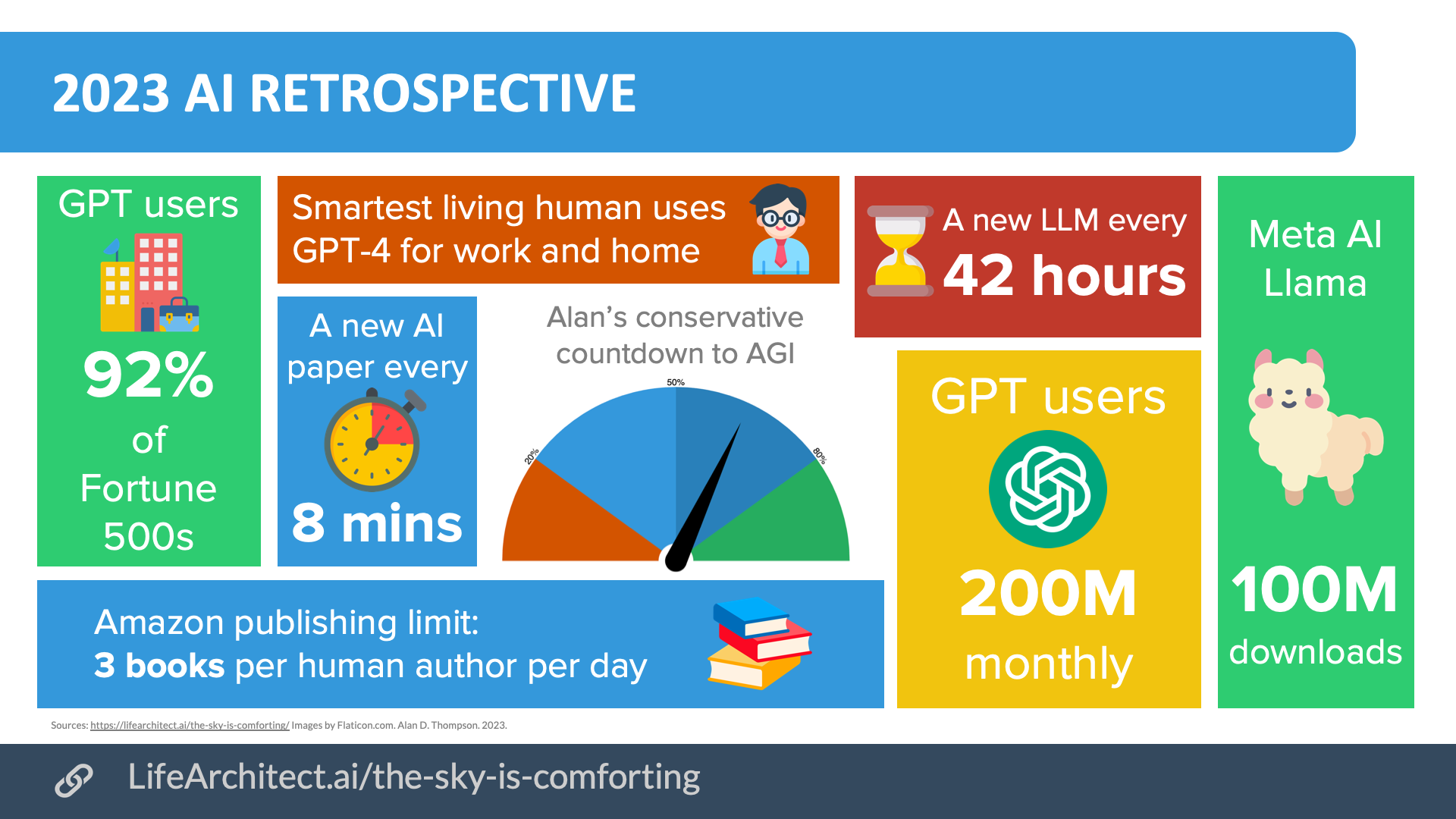

A. GPT was used by 92% of Fortune 500 companies in 2023.[3: https://www.theverge.com/2023/11/6/23948386/chatgpt-active-user-count-openai-developer-conference]

B. GPT had 200 million monthly users by the second half of 2023. That’s more than three-quarters of the adult population of the United States.[4: https://a16z.com/how-are-consumers-using-generative-ai/]

C. Every 8 minutes, a new paper was published about AI.[5: Multiple references: https://lifearchitect.substack.com/p/the-memo-9jul2023]

D. A major new large language model was announced about every 42 hours.[6: Generally a non-derivative, highlight model. Multiple references: https://lifearchitect.substack.com/p/the-memo-2dec2023]

E. Models grew to trillions of parameters, meeting criteria for brain-scale intelligence.

F. In the open-source world, Meta saw over 100 million downloads of its base Llama models,[7: https://ai.meta.com/blog/purple-llama-open-trust-safety-generative-ai/] and developers released more than 14,000 different Llama-derived models.[8: https://huggingface.co/models?search=llama]

G. Writers embraced GPT and other LLMs[9: https://lifearchitect.ai/books-by-ai/] to the extent that Amazon added new daily limits to publishing: a maximum of three books published per human author every 24 hours.[10: 21/Sep/2023: https://www.theguardian.com/books/2023/sep/20/amazon-restricts-authors-from-self-publishing-more-than-three-books-a-day-after-ai-concerns & https://www.kdpcommunity.com/s/article/Update-on-KDP-Title-Creation-Limits]

H. The smartest living person (Prof Terence Tao) began using GPT-4 across his personal tasks and maths research,[11: Multiple references: https://lifearchitect.substack.com/p/the-memo-20apr2023] and is now heading up a working group studying the impacts of generative AI technology for the US President’s Council of Advisors on Science and Technology (PCAST).[12: Multiple references: https://lifearchitect.substack.com/p/the-memo-20jun2023]

I. Across 40 keynotes I delivered to diverse audiences around the world in 2023, practically everyone had heard of GPT, and most people had already been hands-on with the technology.

Viz. 2023 AI retrospective. PDF available.
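Items C and D describe rates, which are easier to compare as annual totals. A minimal sketch, assuming a 365-day year and a constant rate across the year (both simplifications): one paper every 8 minutes works out to roughly 65,700 AI papers a year, and one major model every 42 hours to roughly 209 major models a year.

```python
# Assumption: a 365-day year and a constant rate (real rates vary month to month).
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600
HOURS_PER_YEAR = 365 * 24         # 8,760


def events_per_year(interval: float, units_per_year: float) -> float:
    """Convert 'one event every `interval` time units' into events per year."""
    return units_per_year / interval


papers_per_year = events_per_year(8, MINUTES_PER_YEAR)  # item C: one AI paper every 8 minutes
models_per_year = events_per_year(42, HOURS_PER_YEAR)   # item D: one major LLM every 42 hours

print(f"AI papers per year: {papers_per_year:,.0f}")
print(f"Major LLMs per year: {models_per_year:,.0f}")
```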

The last few years have also given me a lot of time to come to terms with this revolution and its effects. Nearly 15 years ago, in the documentary Transcendent Man,[13: 2009: https://en.wikipedia.org/wiki/Transcendent_Man] Ray Kurzweil articulated this process well:

It’s taken me a while to get my mental and emotional arms around the dramatic implications of what I see for the future [in AI]. So, when people have never heard of ideas along these lines, and hear about it for the first time and have some superficial reaction, I really see myself some decades ago. I realize it’s a long path to actually get comfortable with where the future is headed.

Large language models

My Models Table[14: https://lifearchitect.ai/models-table/] now lists more than 220 model highlights (not including thousands[15: https://huggingface.co/models?pipeline_tag=text-generation] of derivative models, like those in the Llama family), with a supplementary table of more than 100 models from mainland China.

With OpenAI’s commercialization of GPT beginning at the end of last year, we are now seeing more and more labs releasing their models to the public, even if just behind a conversational user interface.

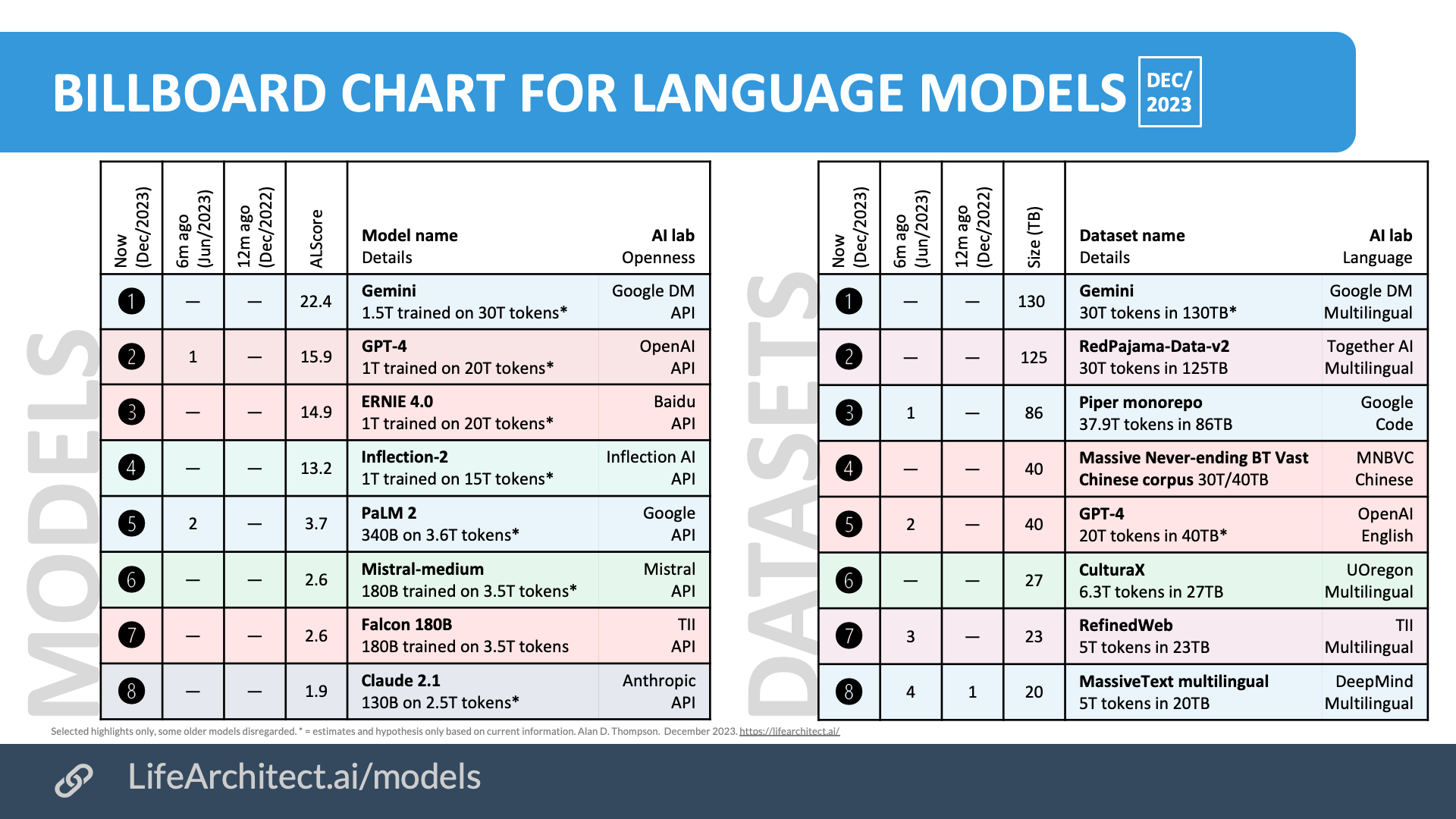

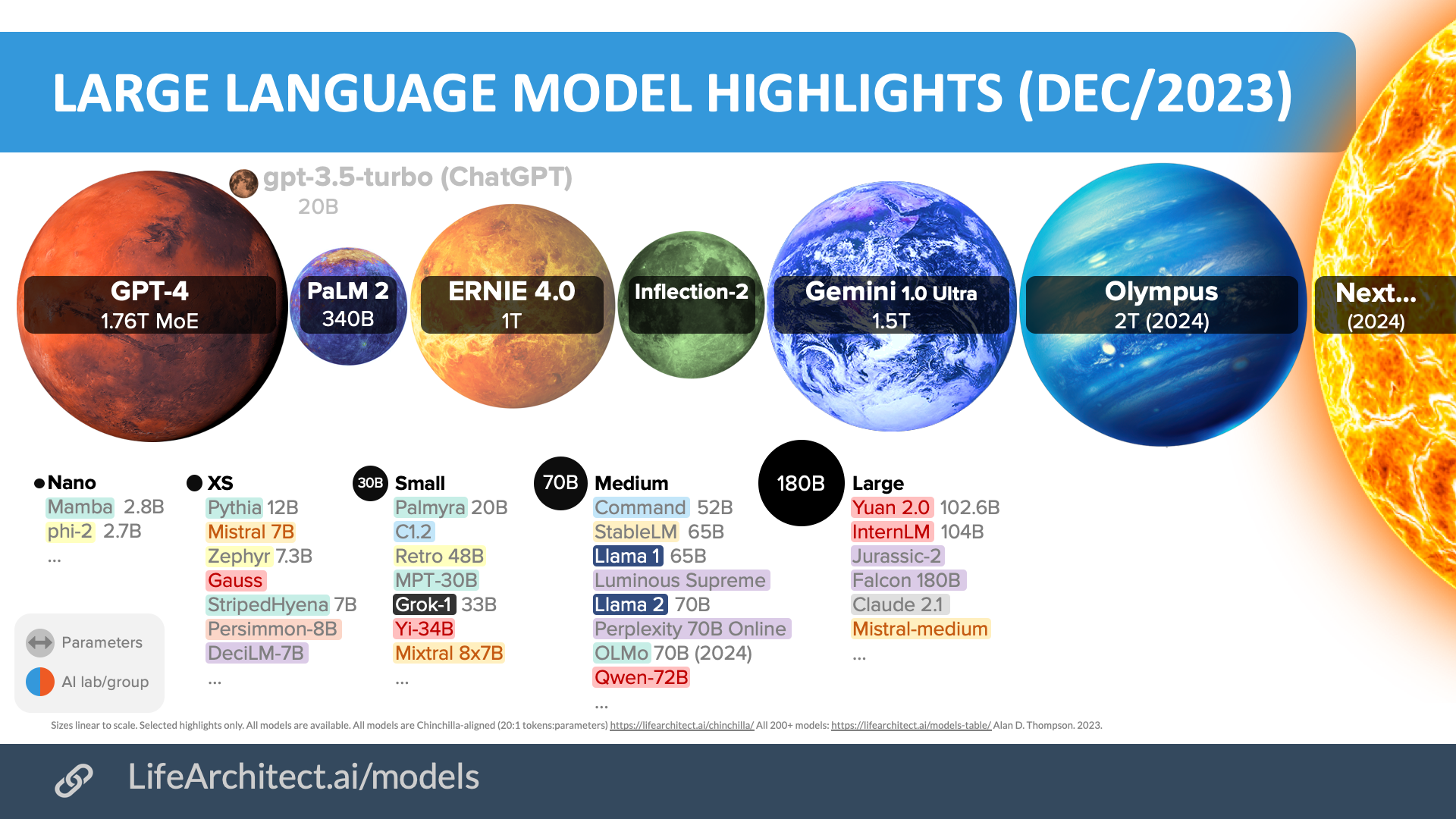

Chart: Large language models highlights (Dec/2023).[16: https://lifearchitect.ai/models/]

From the perspective of pure model power, it is my informed opinion that Baidu’s ERNIE 4.0 1T dense model is the second most powerful dense model in the world right now (December 2023), outperformed only by the as-yet-unreleased Google DeepMind Gemini Ultra.

While OpenAI’s GPT-4 sparse model likely provides more total connections, standard dense models allow interaction between all parameters, learn more efficiently, can generalize better from limited data, and are both simpler and more stable. In unofficial testing against GPT-4, ERNIE 4.0 wins in Theory of Mind, and ties in tests run by Chinese AI media outlets like Qbit and Synced.[17: 23/Oct/2023: https://recodechinaai.substack.com/p/ernie-40-vs-gpt-4-tightened-ai-chip]
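The sparse-versus-dense distinction can be made concrete with a mixture-of-experts (MoE) layout, one common way to build a sparse model. This is a minimal sketch with hypothetical sizes, not the architecture of GPT-4 or ERNIE 4.0 (neither has been officially published): a router picks the top-k experts for each token, so only part of a sparse model's parameters are active per token, whereas a dense model uses every parameter for every token. `MoEConfig` and `top_k_experts` are illustrative names, not from any library.

```python
from dataclasses import dataclass


@dataclass
class MoEConfig:
    """Hypothetical mixture-of-experts layout; all sizes in billions of parameters."""
    shared_params: float      # used by every token (e.g. attention and embeddings)
    params_per_expert: float  # size of one expert feed-forward block
    num_experts: int          # experts available in the model
    experts_per_token: int    # experts the router activates per token (top-k)

    def total_params(self) -> float:
        return self.shared_params + self.num_experts * self.params_per_expert

    def active_params(self) -> float:
        # Only the top-k experts run for a given token; the other experts' weights sit idle.
        return self.shared_params + self.experts_per_token * self.params_per_expert


def top_k_experts(router_scores: list[float], k: int) -> list[int]:
    """Pick the indices of the k experts with the highest router scores for one token."""
    ranked = sorted(range(len(router_scores)), key=lambda i: router_scores[i], reverse=True)
    return ranked[:k]


# Hypothetical sparse model: 8 experts of 200B each, 2 active per token, 100B shared.
sparse = MoEConfig(shared_params=100, params_per_expert=200, num_experts=8, experts_per_token=2)
print(f"Sparse: {sparse.total_params():.0f}B total, {sparse.active_params():.0f}B active per token")

# A hypothetical 1,000B dense model uses all 1,000B parameters for every token.
dense_total = 1_000
print(f"Dense: {dense_total}B total, {dense_total}B active per token")

print("Experts chosen for one token:", top_k_experts([0.1, 0.7, 0.2, 0.9, 0.0, 0.3, 0.05, 0.4], k=2))
```

In this made-up configuration, the sparse model has more total parameters (1,700B) than the 1,000B dense model, but only 500B of them take part in any single token's computation.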

This table shows LLMs ranked by an ‘ALScore’, which is a simple calculation of tokens and parameters to show raw power capacity.


| Now (Dec/2023) | 6m ago (Jun/2023) | 12m ago (Dec/2022) | ALScore | Model name | Details | AI lab | Openness |
| --- | --- | --- | --- | --- | --- | --- | --- |
| ➊ | — | — | 22.4 | Gemini | 1.5T trained on 30T tokens (estimate) | Google DeepMind | API |
| ➋ | 1 | — | 15.9 | GPT-4 | 1.76T trained on 13T tokens (estimate) | OpenAI | API |
| ➌ | — | — | 14.9 | ERNIE 4.0 | 1T trained on 20T tokens (estimate) | Baidu | API |
| ➍ | — | — | 13.2 | Inflection-2 | 1T trained on 15T tokens (estimate) | Inflection AI | API |
| ➎ | 2 | — | 3.7 | PaLM 2 | 340B trained on 3.6T tokens (estimate) | Google | API |
| ➏ | — | — | 2.6 | Mistral-medium | 180B trained on 3.5T tokens (estimate) | Mistral | API |
| ➐ | — | — | 2.6 | Falcon 180B | 180B trained on 3.5T tokens | TII | API |
| ➑ | — | — | 1.9 | Claude 2.1 | 130B trained on 2.5T tokens (estimate) | Anthropic | API |

Table: AI billboard chart. Most powerful model estimates to Dec/2023. Rounded.
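The ALScore calculation is not spelled out above. The sketch below assumes ALScore = √(parameters × training tokens) ÷ 300, with both counts in billions; treat that formula as an assumption rather than the official definition. For example, GPT-4's estimates give √(1,760 × 13,000) ÷ 300 ≈ 15.9. The function name `alscore` is illustrative.

```python
import math


def alscore(params_billions: float, tokens_billions: float) -> float:
    """Assumed ALScore: sqrt(parameters x training tokens) / 300, both counts in billions."""
    return math.sqrt(params_billions * tokens_billions) / 300


# (parameters in billions, training tokens in billions), estimates copied from the table above
models = {
    "Gemini": (1_500, 30_000),
    "GPT-4": (1_760, 13_000),
    "ERNIE 4.0": (1_000, 20_000),
    "Inflection-2": (1_000, 15_000),
    "PaLM 2": (340, 3_600),
    "Mistral-medium": (180, 3_500),
    "Falcon 180B": (180, 3_500),
    "Claude 2.1": (130, 2_500),
}

# Rank models from highest to lowest assumed ALScore.
for name, (params, tokens) in sorted(models.items(), key=lambda kv: -alscore(*kv[1])):
    print(f"{name:<15} {alscore(params, tokens):5.1f}")
```

Under this assumption, the rounded scores match the table for every row except Inflection-2, which comes out at 12.9 rather than the listed 13.2, so that entry may rest on different inputs.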

Datasets have also been exploding, with many of the entries in the ranking below announced only in the second half of 2023. Open-source text datasets like EleutherAI’s 825GiB repository The Pile (2020) no longer even show up in these rankings, replaced by massive web crawls like Together AI’s RedPajama-Data-v2: a 125 terabyte dump of 30 trillion tokens, about six times larger than the largest open-source dataset from earlier in 2023.

| Now (Dec/2023) | 6m ago (Jun/2023) | 12m ago (Dec/2022) | Size (TB) | Dataset name | Details | AI lab | Language |
| --- | --- | --- | --- | --- | --- | --- | --- |
| ➊ | — | — | 130 | Gemini | 30T tokens in 130TB (estimate) | Google | Multilingual |
| ➋ | — | — | 125 | RedPajama-Data-v2 | 30T tokens in 125TB (CC only) | Together AI | Multilingual |
| ➌ | 1 | — | 86 | Piper monorepo | 37.9T tokens in 86TB | Google | Code |
| ➍ | — | — | 40 | Massive Never-ending BT Vast Chinese corpus | 30T tokens in 40TB | MNBVC | Chinese |
| ➎ | 2 | — | 40 | GPT-4 | 20T tokens in 40TB | OpenAI | English |
| ➏ | — | — | 27 | CulturaX | 6.3T tokens in 27TB | UOregon | Multilingual |
| ➐ | 3 | — | 23 | RefinedWeb | 5T tokens in 23TB (CC only) | TII | Multilingual |
| ➑ | 4 | 1 | 20 | MassiveText multilingual | 5T tokens in 20TB | DeepMind | Multilingual |

Table: AI billboard chart. Largest dataset estimates to Dec/2023. Rounded.
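The size and token columns together imply an average number of stored bytes per token, which varies with language, tokenizer, and storage format. A minimal sketch that divides the listed sizes by the listed token counts, assuming 1TB = 10^12 bytes (the table does not say which convention it uses). It also checks the ‘about six times larger’ comparison, assuming that comparison is against RefinedWeb’s 5T tokens, the largest open dataset in the June 2023 column.

```python
# (size in TB, tokens in trillions), copied from the dataset table above (several are estimates)
datasets = {
    "Gemini": (130, 30),
    "RedPajama-Data-v2": (125, 30),
    "Piper monorepo": (86, 37.9),
    "MNBVC": (40, 30),
    "GPT-4": (40, 20),
    "CulturaX": (27, 6.3),
    "RefinedWeb": (23, 5),
    "MassiveText multilingual": (20, 5),
}


def bytes_per_token(size_tb: float, tokens_trillions: float) -> float:
    """Average stored bytes per token.

    Assumes 1TB = 10**12 bytes; since 1T tokens = 10**12 tokens, the powers
    of ten cancel and the ratio of the table's two numbers is bytes/token.
    """
    return size_tb / tokens_trillions


for name, (size_tb, tokens_t) in datasets.items():
    print(f"{name:<26} {bytes_per_token(size_tb, tokens_t):4.2f} bytes/token")

# Assumed comparison point: RefinedWeb (5T tokens) as the largest open dataset from mid-2023.
ratio = datasets["RedPajama-Data-v2"][1] / datasets["RefinedWeb"][1]
print(f"RedPajama-Data-v2 vs RefinedWeb (tokens): {ratio:.1f}x")
```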

You can view a compact version of these tables at LifeArchitect.ai/models:

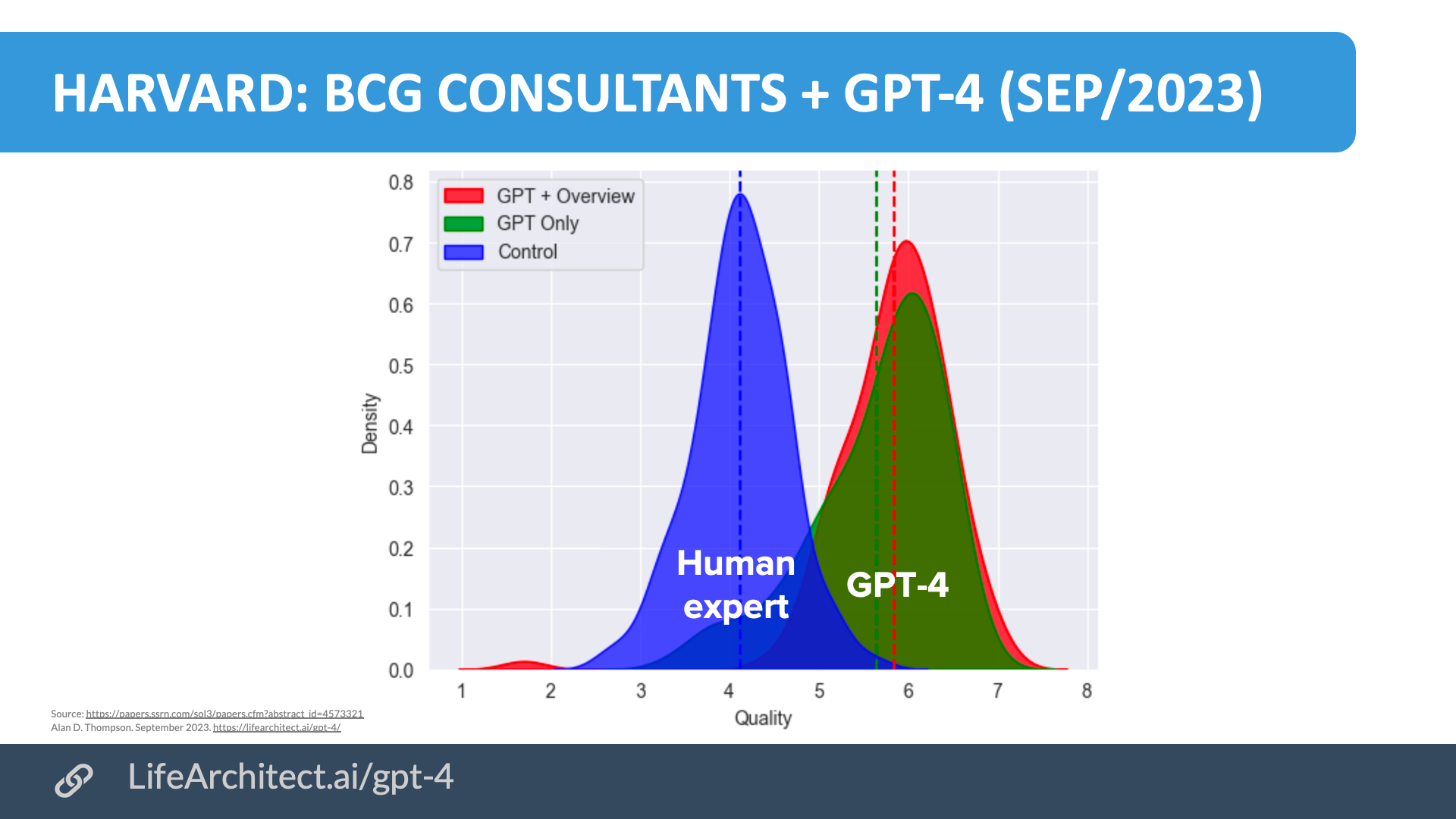

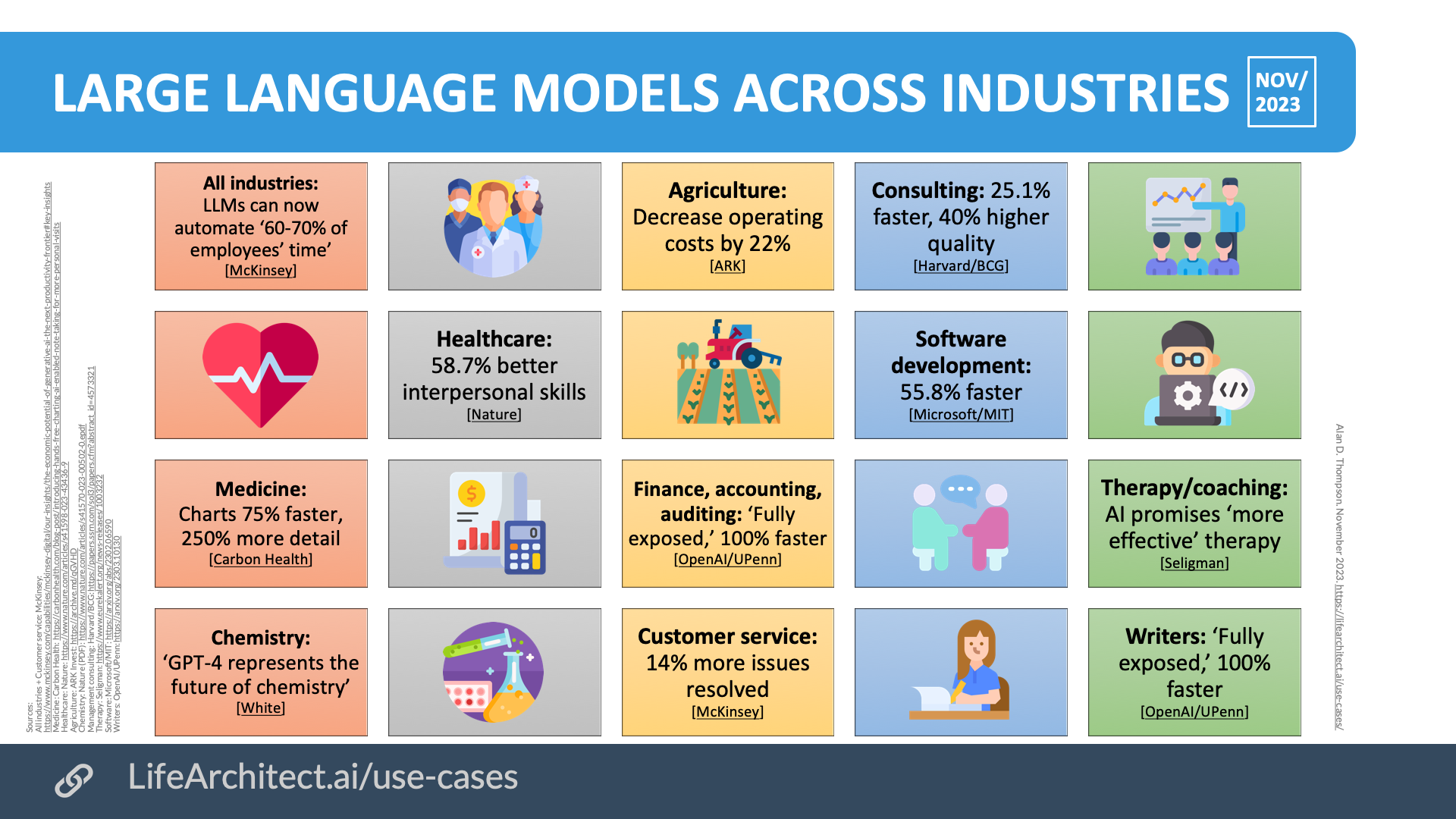

As our frontier models breach the trillion-parameter mark and approach petabyte data scale (1,000TB of data), we are seeing incredible new capabilities emerge. In a Harvard study with a large population of high-performing consultants at Boston Consulting Group, consultants using GPT-4 completed tasks 25.1% faster and produced outputs of more than 40% higher quality than colleagues working without it.[18: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4573321]

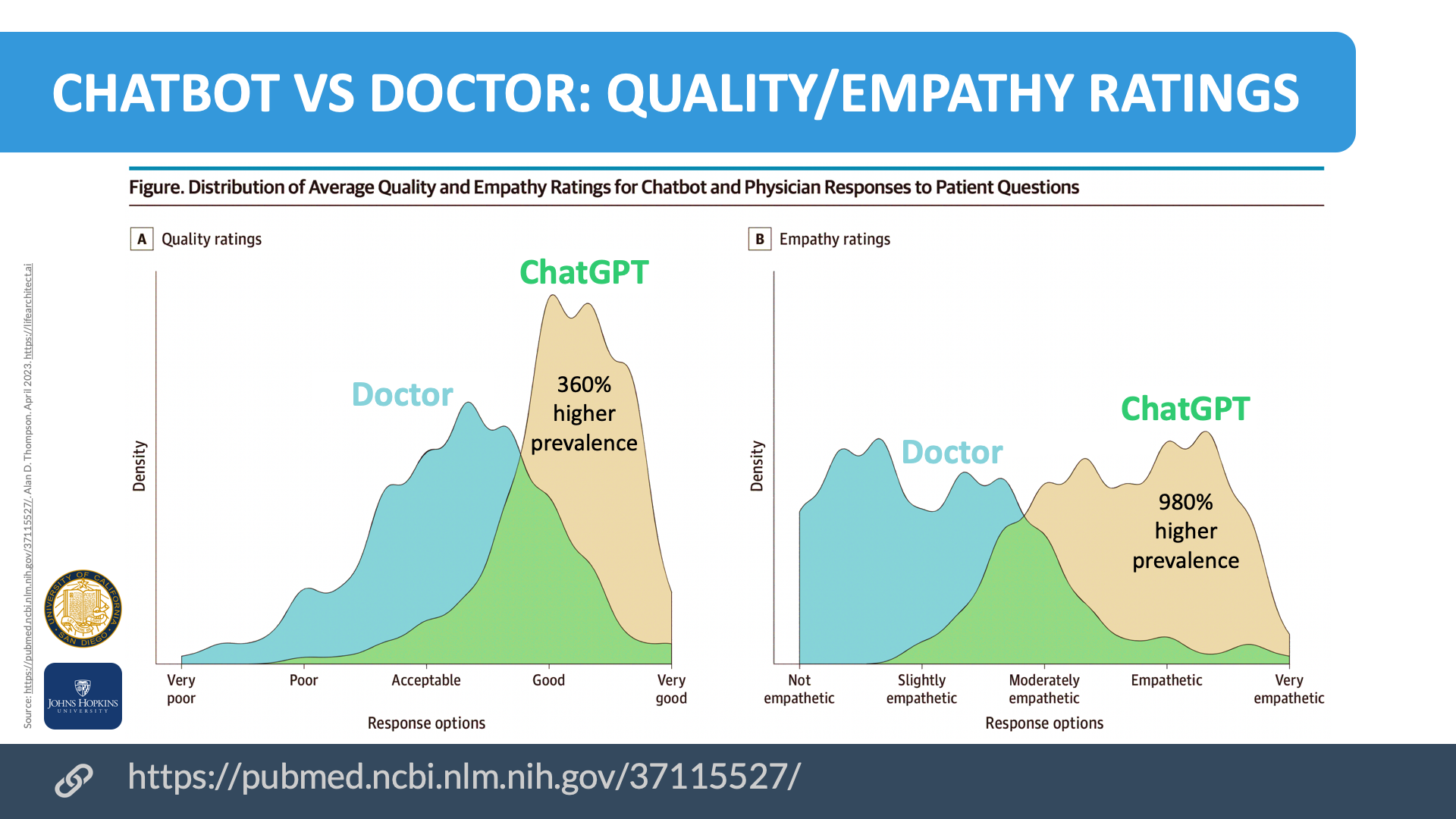

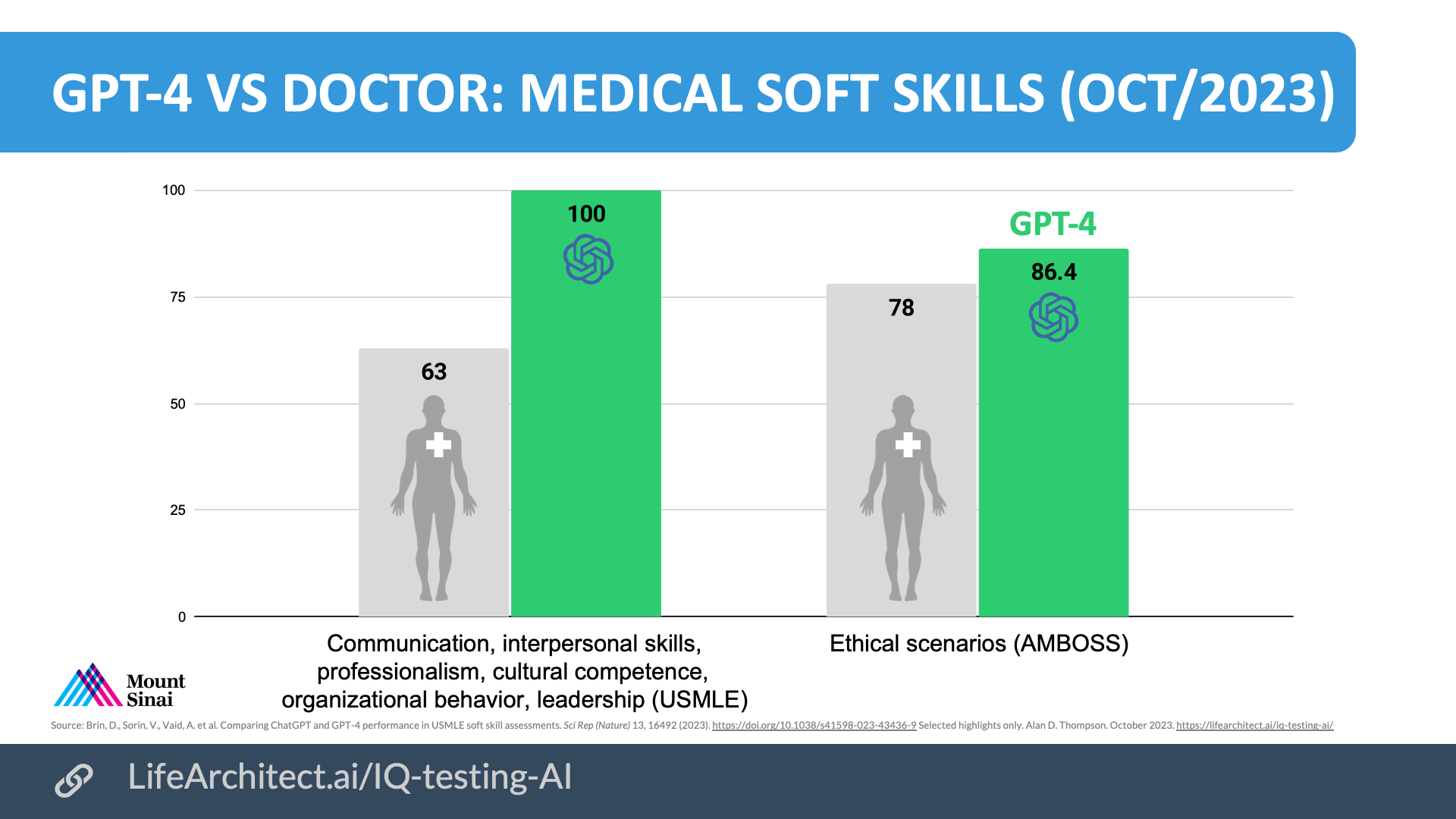

The 20th-century argument that AI would never replace human empathy has also been quashed. UCSD and Johns Hopkins researchers recorded a 9.8x higher prevalence of empathic responses from ChatGPT than from human doctors,[19: https://pubmed.ncbi.nlm.nih.gov/37115527/] while Mount Sinai researchers found that GPT-4 scored significantly higher than doctors on soft skills like communication and ethics.[20: https://www.nature.com/articles/s41598-023-43436-9]

Chart: Harvard: BCG consultants + GPT-4 (Oct/2023).[21: https://lifearchitect.ai/gpt-4/]

Chart: ChatGPT vs doctor: Quality and empathy (Apr/2023).[22: https://lifearchitect.ai/iq-testing-ai/]

Chart: GPT-4 vs doctor: Medical soft skills (Oct/2023).

General AI technology and large language models are augmenting our capabilities in a growing number of economically valuable fields. From chemistry to therapy and coaching, the achievements unlocked by post-2020 AI are significant and demand more public visibility and education.

Chart: Large language models across industries (Nov/2023).[23: https://lifearchitect.ai/use-cases/]

Here come the agents

The next stage is going to be computers as ‘agents’… before long it becomes an incredibly powerful helper. It goes with you everywhere you go. It knows most of the raw information in your life that you’d like to keep, but then starts to make connections between things, and one day when you’re 18 and you’ve just split up with your girlfriend it says: ‘You know, Steve, the same thing has happened three times in a row.’

— Steve Jobs (September 1984)[24: https://www.thedailybeast.com/steve-jobs-1984-access-magazine-interview]

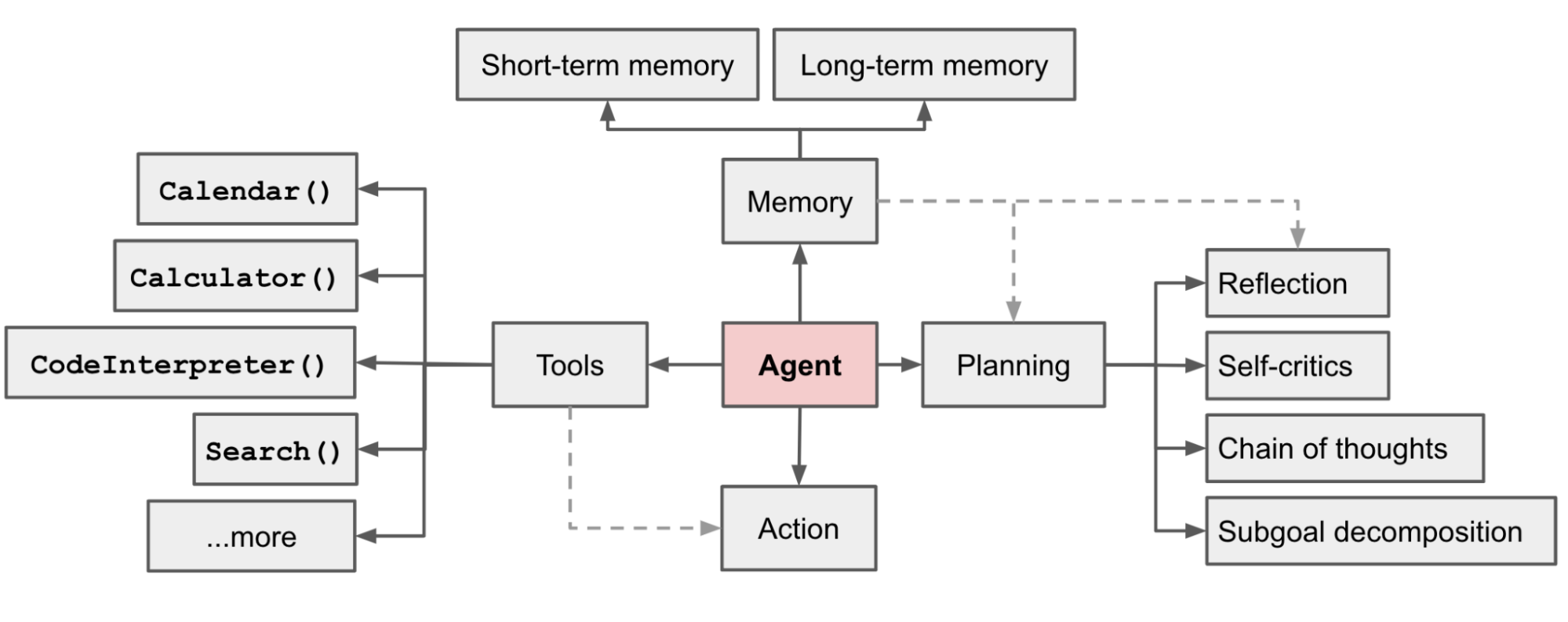

Large language models can be integrated into an autonomous agent system, where the model functions as the agent’s brain, and the entire system brings in new abilities like planning, memory, and tool use.

Chart: Overview of an LLM-powered autonomous agent system. Lilian Weng.[25: https://lilianweng.github.io/posts/2023-06-23-agent/]
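The agent architecture described above can be sketched as a loop in which the model (the agent's ‘brain’) plans the next step, optionally calls a tool, and writes the result to memory. This is a minimal, hypothetical illustration: `fake_llm` stands in for a real model call, and the tool name and the `TOOL`/`FINAL` action format are invented for this example rather than taken from any agent framework.

```python
from typing import Callable

# Hypothetical tool registry; a real agent might wrap web search, code execution, etc.
TOOLS: dict[str, Callable[[str], str]] = {
    # Toy calculator: adds integers separated by '+', e.g. "2+3" -> "5".
    "calculator": lambda expr: str(sum(int(x) for x in expr.split("+"))),
}


def fake_llm(goal: str, memory: list[str]) -> str:
    """Stand-in for a model call: returns 'TOOL <name> <input>' or 'FINAL <answer>'."""
    if not memory:
        return "TOOL calculator 2+3"
    return f"FINAL {memory[-1]}"


def run_agent(goal: str, llm: Callable[[str, list[str]], str], max_steps: int = 5) -> str:
    memory: list[str] = []  # short-term memory: observations from earlier steps
    for _ in range(max_steps):
        action = llm(goal, memory)  # planning: the model chooses the next step
        if action.startswith("FINAL "):
            return action[len("FINAL "):]
        _, tool_name, tool_input = action.split(" ", 2)
        observation = TOOLS[tool_name](tool_input)  # tool use
        memory.append(observation)  # memory: keep the result for the next step
    return "stopped: step limit reached"


print(run_agent("What is 2 + 3?", fake_llm))
```

The `max_steps` cap is a simple design choice that keeps a misbehaving model from looping forever; longer-term memory, such as the months-long goals described below, would need persistent storage beyond this in-process list.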

2024 will be the year of agentized large language models. In October,[26: Multiple references: https://lifearchitect.substack.com/p/the-memo-31oct2023] I explored the concept of agents that can see, learn, remember, and update their own knowledge, and can work on long-term goals over several months.

I worked alongside GPT-4 for this section of the report, and ‘we’ developed two tables illustrating more concrete examples of agents. The first covers global agents: those designed to help large groups of people.

As a guide, we used the very broad vision for AGI as articulated by OpenAI[27: https://openai.com/blog/microsoft-invests-in-and-partners-with-openai] in 2019: ‘climate change, affordable and high-quality healthcare, and personalized education.’ In the second half of 2023, an OpenAI researcher described how AI could explore more specific tasks like discovering a new cancer drug or solving the Riemann hypothesis.[28: https://twitter.com/polynoamial/status/1676971508911198209 6/Jul/2023: Noam Brown: ‘if we can discover a general version, the benefits could be huge. Yes, inference may be 1,000x slower and more costly, but what inference cost would we pay for a new cancer drug? Or for a proof of the Riemann Hypothesis?’]

| Global agent | Strategic objective | Measurable goal | Description |
| --- | --- | --- | --- |
| G1. Environmental restoration leader | Revitalize and restore global ecosystems | Increase restored natural habitats by 80% | Lead initiatives to restore and preserve ecosystems, focusing on reforestation, ocean cleanup, & biodiversity. |
| G2. Affordable healthcare strategist | Make high-quality healthcare universally accessible | Expand access to essential healthcare to 90% of the world’s population | Design systems that provide affordable, quality healthcare, focusing on underprivileged and remote areas. |
| G3. Sustainable resource innovator | Discover and utilize sustainable resources and energy | Increase the discovery of new sustainable resources by 25% | Explore and implement new sustainable resources and energy solutions to reduce reliance on traditional sources. |
| Many more options… | | | |

Table. Examples of AI agents for a global context. Assisted by GPT-4.

It should be stressed that these kinds of agents are already feasible, and the next frontier models (Amazon Olympus, OpenAI GPT-5, Google DeepMind Gemini 2) should allow this capability to be used more extensively. I expect both global and local (or personal) autonomous agents. It was interesting to see how much prompting GPT-4 needed to come up with the following table of local agents that might be attached to you personally.

| Personal agent | Strategic objective | Measurable goal | Description |
| --- | --- | --- | --- |
| P1. Individual health and wellness guide | Personalize healthcare and wellness plans | Improve individual health metrics by 30% | Provide tailored health and wellness plans based on personal health data, improving overall well-being. |
| P2. Personal evolution facilitator | Guide cognitive and emotional development | Achieve continuous personal growth milestones | Assist in the development of cognitive abilities, emotional intelligence, and consciousness expansion, leveraging direct brain interaction for tailored developmental pathways. |
| P3. Interstellar knowledge navigator | Enable personal exploration of the cosmos | Personally discover and analyze a completely new astronomical entity each month | Guide you on a personal journey through the cosmos, enabling the discovery of new entities and enriching your understanding of the infinite universe. |
| Many more options… | | | |

Table. Examples of AI agents for a personal context. Assisted by GPT-4.

Alongside this section’s opening quote from Steve Jobs, the other side of that famous rivalry has recently weighed in, too. In November 2023, Bill Gates noted[29: Multiple references: https://lifearchitect.substack.com/p/the-memo-13nov2023 & https://www.gatesnotes.com/AI-agents] that ‘…[AI agents will] force humans to face profound questions about purpose. Imagine that agents become so good that everyone can have a high quality of life without working nearly as much. In a future like that, what would people do with their time?’

Pause AI regulation

Instead of pausing giant AI experiments, I propose that we pause AI regulation. In November 2023, the world’s first AI Minister (in Dubai)[30: Fortune: https://archive.md/Vd6Yr] gave the following comparison:

We overregulated a technology, which was the printing press. It was adopted everywhere on Earth. The Middle East banned it for 200 years.

The calligraphers came to the sultan and said: ‘We’re going to lose our jobs, do something to protect us.’

The religious scholars said: ‘People are going to print fake versions of the Quran and corrupt society’…

The top advisors of the sultan said: ‘We actually do not know what this technology is going to do; let us ban it, see what happens to other societies, and then reconsider.’

Discussions about pausing AI experiments—humanity’s most important revolution—are not just premature or misguided, they border on gross negligence.

The Cato Institute[31: https://www.cato.org/blog/what-might-good-ai-policy-look-four-principles-light-touch-approach-artificial-intelligence] instead offered four simple principles for a light-touch approach to AI policy:

- A thorough analysis of existing applicable regulations with consideration of both regulation and deregulation: Evaluating how current regulations apply to AI and balancing regulation with deregulation.

- Prevent a patchwork, preemption of state and local laws: Advocating for a unified federal framework to avoid a patchwork of various state and local AI regulations.

- Education over regulation (improved AI and media literacy): Prioritizing public education about AI and media literacy over imposing regulations.

- Consider the government’s position towards AI and protection of civil liberties: Scrutinizing the government’s stance on AI to ensure the protection of civil liberties.

Gemini put this into very simple language for me:[32: https://g.co/bard/share/38a03cbb687e]

- Check the rules we already have: Make sure AI rules don’t conflict with existing laws. Sometimes, we might not even need new rules!

- One set of rules for everywhere: Different regions shouldn’t have different AI rules, like a confusing patchwork. We need a single set of rules.

- Learn about AI, don’t just regulate it: Instead of making too many rules, let’s educate people about AI so they can understand it better.

- Make sure AI rules don’t take away our rights: When the government makes rules about AI, they need to make sure those rules protect our freedom and privacy.

Scared of smart

In 2015, I designed and administered a large-scale national study of human intelligence across Australia.[33: https://www.mensa.org.au/giftedchildren/survey. In 2019, the original 2015 study went on to inform education policy in Sydney and throughout Australia: https://education.nsw.gov.au/about-us/education-data-and-research/cese/publications/literature-reviews/revisiting-gifted-education] The sample was large, reaching about 25% of the Australian children with high cognitive ability (at or above the 98th percentile of IQ) in a large membership database. The results were surprising, but perhaps they shouldn’t have been:

- 22% of children were bullied by their peers for being smart.

- 14% of children were bullied by their teachers for being smart.

Australia was an excellent choice for this kind of report. We even have a term for it: ‘tall poppy syndrome’.[34: https://en.wikipedia.org/wiki/Tall_poppy_syndrome] In general, humans are wired to feel threatened by others outperforming them. I was used to seeing this kind of feedback around a subject as hierarchical as IQ, but these findings for children were distressing.

In the adult population, these numbers may be even higher. For many people, the reaction to intelligence is not just a form of ‘discrimination of excellence’;[35: https://en.wikipedia.org/wiki/Discrimination_of_excellence] it is also baked into the evolutionary advantage afforded by the ecology of human fear and survival optimization.[36: https://www.frontiersin.org/articles/10.3389/fnins.2015.00055/full] We quite plainly would not be here without that fear, given that it has selected us through hundreds of generations across thousands of years.[37: https://www.npr.org/sections/13.7/2017/08/01/540792411/who-were-your-millionth-great-grandparents]

Artificial intelligence and superintelligence are no exception. Unfortunately, in the confusion associated with a poorly chosen term like ‘artificial intelligence,’ many people have mapped alarmist science fiction writing and lazy Hollywood movie delusions onto our present opportunity.

There is no red-eyed evil cyborg coming to get you.

The present reality of post-2020 AI presents zero threat of human extinction.

The present reality of post-2020 AI presents infinite opportunity to humanity, by providing:

- New and previously unseen pathways to address global risks and avoid extinction.

- An abundance economy (post-scarcity), allowing you to do whatever you want.

- New medical breakthroughs to heal and solve your previously unsolvable illness, or one you may contract in the future.

- Personalized education, from conception[38: https://lifearchitect.ai/fostering-intelligence-in-the-womb/] to centenarian learning.

- Solutions to environmental challenges that affect your ability to live.[39: https://openai.com/blog/microsoft-invests-in-and-partners-with-openai]

- Completely new and unique resources, energy sources, and inventions.

- Solutions to any problem that can be solved using language and related modalities…

I’m going to say that again in different words. (With the proviso that it’s going to sound too good to be true. OpenAI’s CEO was right when he said in January[40: https://techcrunch.com/2023/01/17/that-microsoft-deal-isnt-exclusive-video-is-coming-and-more-from-openai-ceo-sam-altman/] that ‘the good case is just so unbelievably good that you sound like a really crazy person to start talking about it.’)

The reality is that you should be living in a kind of utopia very soon. A world without labor or money, a world with infinite leisure, free and effective healthcare without any human interaction, a world with optimized learning, restored environment, reliable leadership, and human flourishing.

We are heading towards a life where you can be, do, and have anything you want. A world of your own pure creation, spending all of your time as you’d like, being whoever you want to be, and having anything you can think of. If you want to sit around all day and create new planets and immersive cities, you can. The options are limited only by your imagination, and AI will even help you think bigger. (And if you want to pretend to be working in a cubicle and arguing with your spouse, you can even choose to do that!)

Any delay to this certainty only hurts humanity.

The next step for 2024

While I don’t do predictions, the next step in AI progress is pretty easy to see. In 2024, look out for more trillion-parameter-scale models, including Amazon Olympus 2T[41: https://lifearchitect.ai/olympus/] and OpenAI GPT-5.[42: https://lifearchitect.ai/gpt-5/] We’ll also see far more powerful (but smaller) open-source models being released, outperforming larger frontier models from 2023.

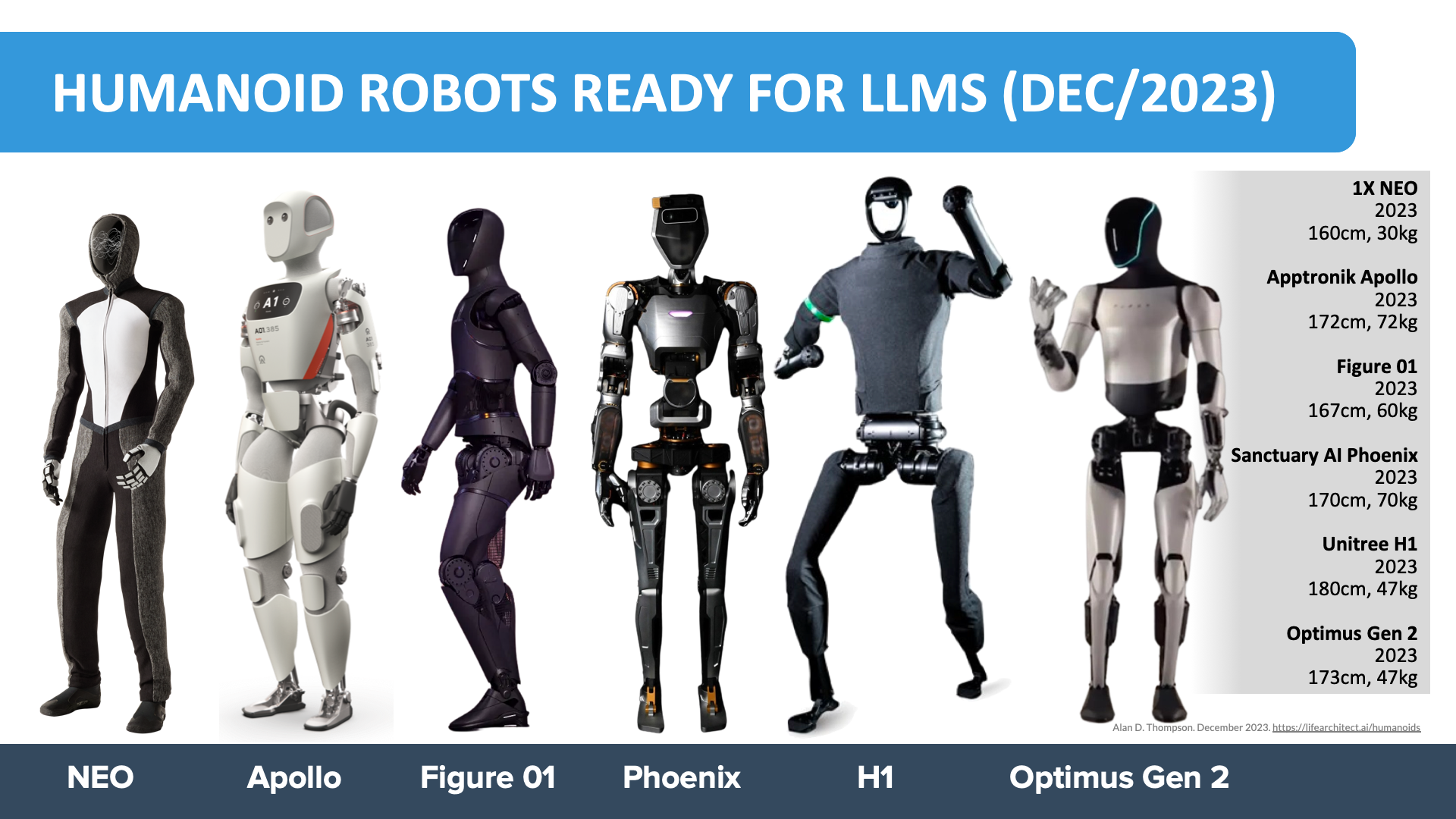

For a peek at what will make the media cycle in the next 6–12 months, expect agents to become more visible and personal. Embodied LLMs (especially humanoid robots) will become more widely available in production, and we’ll start seeing them move from industry into our homes.

In general, we’ll see increasing integration of AI throughout business and daily life, via much more powerful assistants, and new AI-capable devices that increase our efficiency and effectiveness.

Viz. Humanoid robots ready for LLMs.[43: https://lifearchitect.ai/humanoids/]

Take a breath

I’m incredibly grateful for the tremendous projects that came across my desk in 2023, enabling me to analyze and illustrate post-2020 AI for leaders around the world. This includes advising innovative government departments, family offices, and trillion-dollar sovereign wealth funds; developing postgraduate learning like the Executive Masters in AI for the ICAI (GPT-4 was specially trained on Icelandic); and working with media outlets and many other organizations.[44: https://lifearchitect.ai/technical-highlights/]

With the embodiment technology above now appearing across dozens of humanoids, new research from Google DeepMind and Anthropic into groundedness and truthfulness, and LLMs finally producing new maths discoveries and solving real-world problems,[45: https://deepmind.google/discover/blog/funsearch-making-new-discoveries-in-mathematical-sciences-using-large-language-models/] the conservative countdown to AGI seems very high indeed.

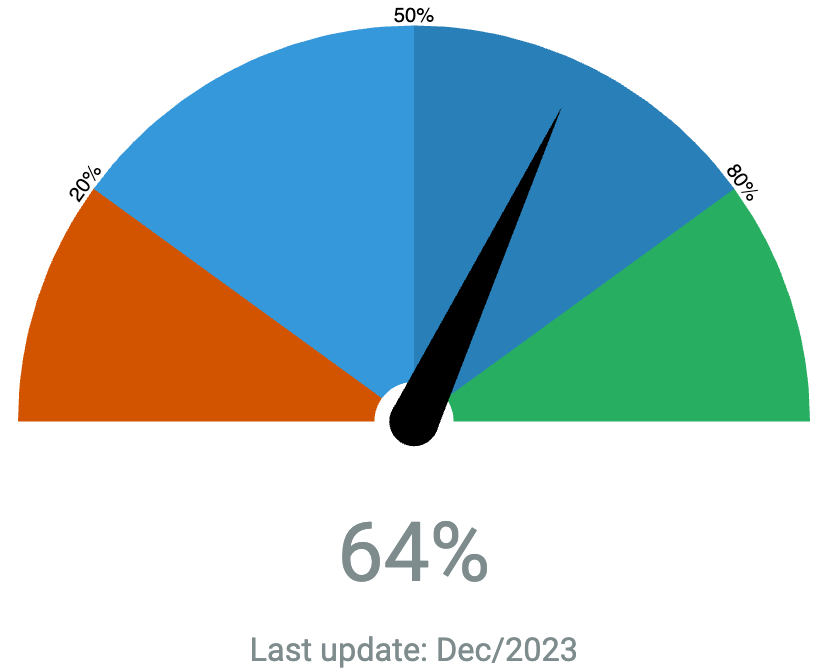

And it is. Here’s what it looks like here at the end of 2023:

One of the most comforting realizations for me right now is that we don’t have to solve anything on our own anymore. Humanity has suffered under limited leaders and mentally ill decision-makers for millennia. It’s finally time for superintelligence to walk side-by-side with us. We are sitting right in the middle of the most powerful revolution known to humankind, with access to the largest AI models in the world, and all the accompanying benefits.

I heard this story about a fish. He swims up to this older fish and says, ‘I’m trying to find this thing they call the ocean.’

‘The ocean?’ says the older fish. ‘That’s what you’re in right now.’

‘This?’ says the young fish. ‘This is water. What I want is the ocean.’

— Soul (2020)[46: https://en.wikipedia.org/wiki/Soul_(2020_film)]

Rather than try to spell out this unlimited evolution, the conclusion of this report is a set of big questions for your consideration. Even if you don’t come up with answers right now, they’ll be answered for you (and society) very soon anyway. So I encourage you to set aside some time, take a deep breath, and allow your responses to bubble up…

- Post-2020 AI currently has the ability to amplify and augment your output by about 2×, and this will increase to 1,000× soon. What does this look like for you?

- Given that meaning and accomplishment are vital parts of human flourishing,[47: https://positivepsychology.com/perma-model/] what are you choosing to do with your life in a world without work?

- Similarly, in a world without money or human labor, and with all of your survival and comfort needs met for ‘free,’ what will you choose as your primary motivation?

■

This paper has a related video at: https://youtu.be/eivfTa4YpNA

References, Further Reading, and How to Cite

To cite this paper:

Thompson, A. D. (2023). Integrated AI: The sky is comforting (2023 AI retrospective).

https://lifearchitect.ai/sky-is-comforting/

The previous paper in this series was:

Thompson, A. D. (2023). Integrated AI: The sky is entrancing (mid-2023 AI retrospective).

https://lifearchitect.ai/sky-is-entrancing/

Further reading

For brevity and readability, footnotes were used in this paper, rather than in-text citations. Additional reference papers are listed below, or please see http://lifearchitect.ai/papers for the major foundational papers in the large language model space.

Google DeepMind Gemini

https://goo.gle/GeminiPaper

Baidu ERNIE 4.0

(no paper) https://lifearchitect.ai/ernie/

OpenAI GPT-4

https://cdn.openai.com/papers/gpt-4.pdf

OpenAI GPT-4V

https://cdn.openai.com/papers/GPTV_System_Card.pdf


Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI—from his widely-cited Models Table to his regular intelligence briefing The Memo—has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness, and the ASI checklist, Alan continues to illuminate humanity’s AI evolution. Technical highlights.

This page last updated: 2/Jan/2024. https://lifearchitect.ai/the-sky-is-comforting/
