A Comprehensive Analysis of Datasets Used to Train GPT-1, GPT-2, GPT-3, GPT-NeoX-20B, Megatron-11B, MT-NLG, and Gopher

A Comprehensive Analysis of Datasets Used to Train GPT-1, GPT-2, GPT-3, GPT-NeoX-20B, Megatron-11B, MT-NLG, and Gopher

Alan D. Thompson

LifeArchitect.ai

March 2022

26 pages incl title page, references, appendix.

Current datasets and updates since publication

Obviously a lot has changed since publication of this report back in Mar/2022. For the latest updates, see the Datasets Table.

Open the Datasets Table in a new tab

Update Sep/2024: OpenAI now allows people to review their training dataset under very strict conditions (Hollywood Reporter, 24/Sep/2024).

Update Feb/2024: A new paper by the South China University of Technology, ‘Datasets for Large Language Models: A Comprehensive Survey’ provides an alternative view of major training datasets (181 pages, 28/Feb/2024).

The 2022 paper

Reviews

Received by AllenAI (AI2).

Received by the UN.

Cited in lit review paper by USyd + USTC.

Abstract

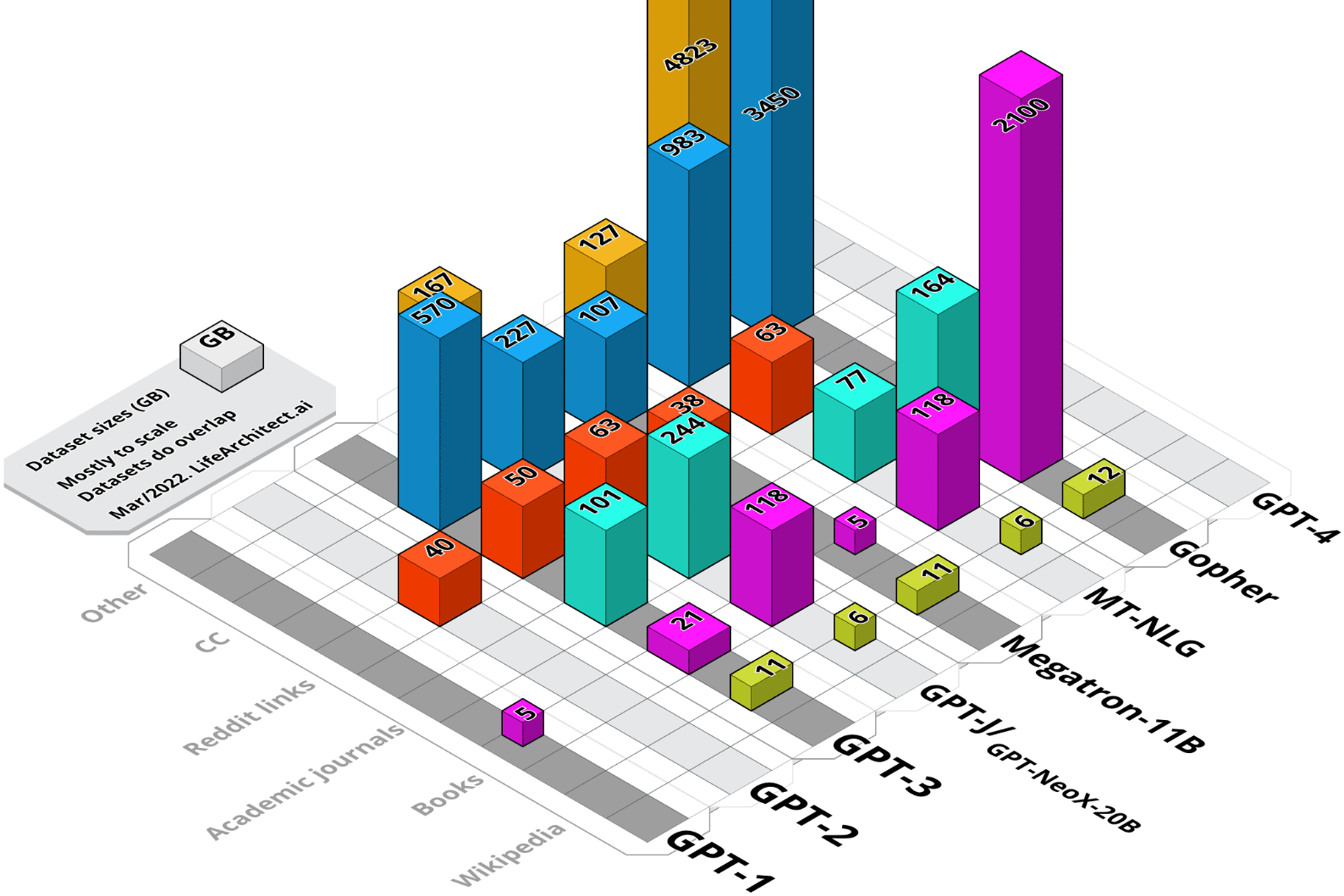

Pre-trained transformer language models have become a stepping stone towards artificial general intelligence (AGI), with some researchers reporting that AGI may evolve from our current language model technology. While these models are trained on increasingly larger datasets, the documentation of basic metrics including dataset size, dataset token count, and specific details of content is lacking. Notwithstanding proposed standards for documentation of dataset composition and collection, nearly all major research labs have fallen behind in disclosing details of datasets used in model training. The research synthesized here covers the period from 2018 to early 2022, and represents a comprehensive view of all datasets—including major components Wikipedia and Common Crawl—of selected language models from GPT-1 to Gopher.

Contents

1. Overview

1.1. Wikipedia

1.2. Books

1.3. Journals

1.4. Reddit links

1.5. Common Crawl

1.6. Other

2. Common Datasets

2.1. Wikipedia (English) Analysis

2.2. Common Crawl Analysis

3. GPT-1 Dataset

3.1. GPT-1 Dataset Summary

4. GPT-2 Dataset

4.1. GPT-2 Dataset Summary

5. GPT-3 Datasets

5.1. GPT-3: Concerns with Dataset Analysis of Books1 and Books2

5.2. GPT-3: Books1

5.3. GPT-3: Books2

5.4. GPT-3 Dataset Summary

6. The Pile v1 (GPT-J & GPT-NeoX-20B) datasets

6.1. The Pile v1 Grouped Datasets

6.2. The Pile v1 Dataset Summary

7. Megatron-11B & RoBERTa Datasets

7.1. Megatron-11B & RoBERTa Dataset Summary

8. MT-NLG Datasets

8.1. Common Crawl in MT-NLG

8.2. MT-NLG Grouped Datasets

8.3. MT-NLG Dataset Summary

9. Gopher Datasets

9.1. MassiveWeb Dataset Analysis

9.2. Gopher: Concerns with Dataset Analysis of Wikipedia

9.3. Gopher: No WebText

9.4. Gopher Grouped Datasets

9.5. Gopher Dataset Summary

10. Conclusion

11. Further reading

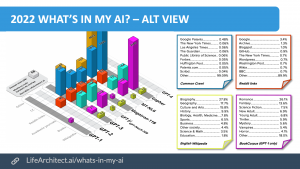

Appendix A: Top 50 Resources: Wikipedia + CC + WebText (i.e. GPT-3)

Download PDF of alt view (1920×1080 slide)

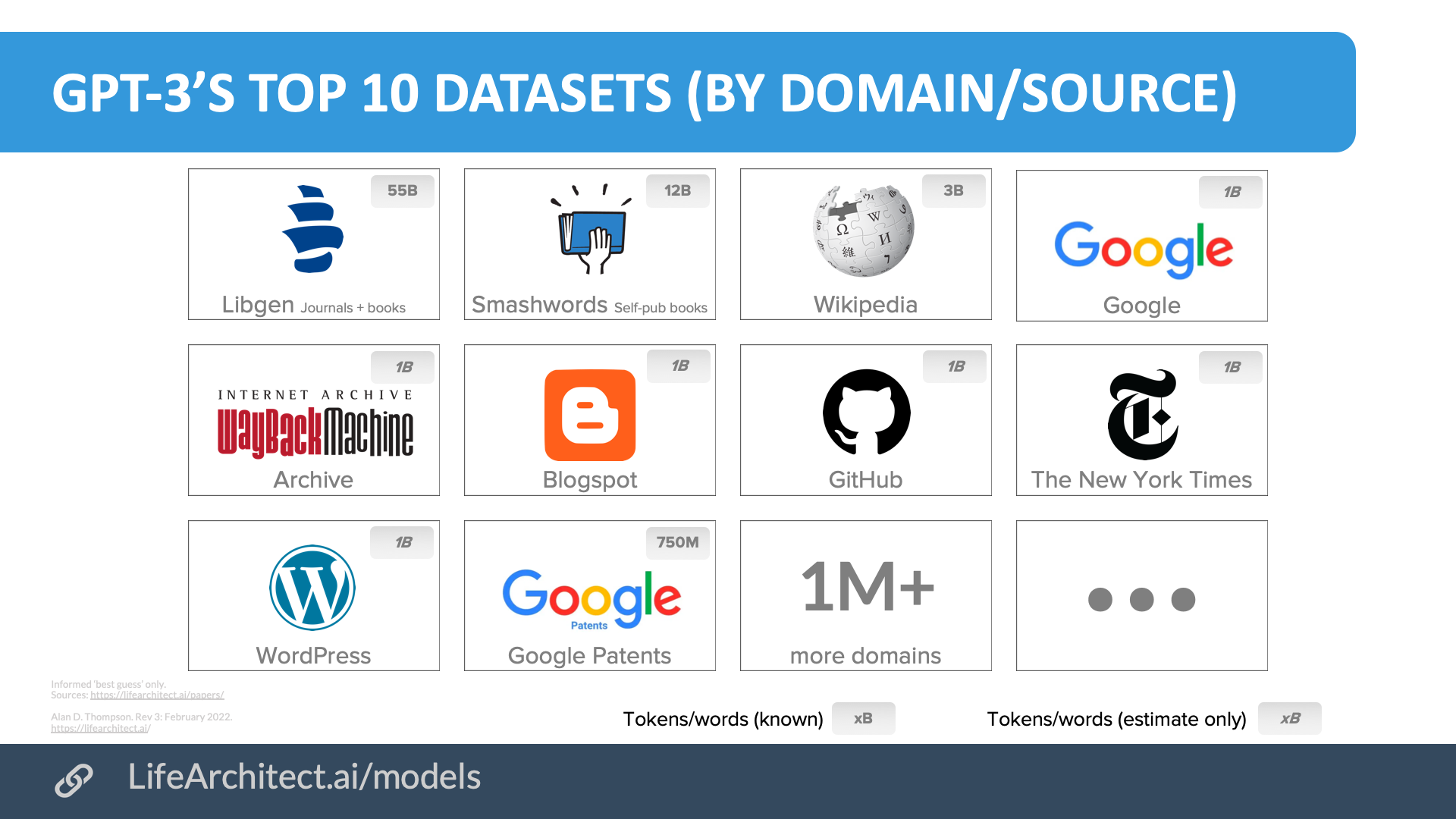

GPT-3’s top 10 datasets by domain/source

Download source (PDF)

Contents: View the data (Google sheets)

Video: Presentation of this paper @ Devoxx Belgium 2022

All dataset reports by LifeArchitect.ai (most recent at top)| Date | Type | Title |

| Dec/2025 | 📑 | Genesis Mission |

| Jan/2025 | 📑 | What's in Grok? |

| Jan/2025 | 💻 | NVIDIA Cosmos video dataset |

| Aug/2024 | 📑 | What's in GPT-5? |

| Jul/2024 | 💻 | Argonne AuroraGPT |

| Sep/2023 | 📑 | Google DeepMind Gemini: A general specialist |

| Feb/2023 | 💻 | Chinchilla data-optimal scaling laws: In plain English |

| Aug/2022 | 📑 | Google Pathways |

| Mar/2022 | 📑 | What's in my AI? |

| Sep/2021 | 💻 | Megatron the Transformer, and related language models |

| Ongoing... | 💻 | Datasets Table |

Get The Memo

by Dr Alan D. Thompson · Be inside the lightning-fast AI revolution.Informs research at Apple, Google, Microsoft · Bestseller in 147 countries.

Artificial intelligence that matters, as it happens, in plain English.

Get The Memo.

Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI—from his widely-cited Models Table to his regular intelligence briefing The Memo—has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness, and the ASI checklist, Alan continues to illuminate humanity’s AI evolution. Technical highlights.

Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI—from his widely-cited Models Table to his regular intelligence briefing The Memo—has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness, and the ASI checklist, Alan continues to illuminate humanity’s AI evolution. Technical highlights.This page last updated: 26/Sep/2024. https://lifearchitect.ai/whats-in-my-ai/↑