An Exploration of the Pathways Architecture from PaLM to Parti

Alan D. Thompson

LifeArchitect.ai

August 2022

24 pages, including title page, references, and appendices.

Updates to the Pathways family since publication (most recent at top)

| Date | Model | Notes |
|---|---|---|
| 13/Oct/2023 | PaLI-3 | PaLI-3: paper. |
| 26/Jul/2023 | Med-PaLM M | Med-PaLM Multimodal: paper. |
| 22/Jun/2023 | AudioPaLM | AudioPaLM = PaLM 2 + AudioLM: paper. |
| 29/May/2023 | PaLI-X 55B | PaLI-X 55B: paper. |
| 10/May/2023 | PaLM 2 | PaLM 2: announce. |
| 12/Mar/2023 | Med-PaLM 2 | Med-PaLM 2: announce. |
| 6/Mar/2023 | PaLM-E | PaLM-E is PaLM Embodied with 562B params: paper, research site. |
| 19/Jan/2023 | – | Dr Jeff Dean provided extended commentary in his review of 2022: blog. |
| 26/Dec/2022 | Med-PaLM | Med-PaLM, a medical finetuned model based on Flan-PaLM: paper. |
| 20/Oct/2022 | Flan-PaLM | Flan-PaLM, based on Finetuning language models (Flan): paper. |
| 20/Oct/2022 | U-PaLM | U-PaLM, a version of PaLM using less power/hours of compute: paper. |
| 15/Sep/2022 | PaLI 17B | PaLI 17B: Google Pathways Language and Image model: paper. |
| 16/Aug/2022 | PaLM-SayCan | PaLM + Robots: Google PaLM-SayCan: announce, research site, video. |

Reviews

Received by several major governments; used in policy analysis.

Abstract

With over a million subscribed users, GPT-3 and related models have received extensive press coverage and public attention. Much like a flashy Porsche driving down the Autobahn, these models look impressive and perform well. However, it is only a matter of time until they are overtaken by a much larger supercar, and that vehicle is already approaching rapidly. Google Pathways was announced at the end of 2021, and several of its components have appeared in 2022: PaLM, PaLM-Coder, Parti, and Minerva. While all of these models are closed (available only for Google’s internal research), it is anticipated that a future Pathways model will be publicly released. This report explores the accomplishments of the Pathways models so far, with PaLM and its related language models, at 540B parameters, already more than triple the size of GPT-3 at 175B.
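The size comparison in the abstract can be checked with simple arithmetic. The following is a minimal sketch that uses only the parameter counts given in this report's section titles (PaLM 540B, GPT-3 175B, Jurassic-1 178B). The dictionary and function names are illustrative and do not come from the report.

```python
# Parameter counts in billions, taken from this report's section titles.
# The names PARAMS_B and size_ratio are hypothetical, chosen for illustration.
PARAMS_B = {"PaLM": 540, "GPT-3": 175, "Jurassic-1": 178}


def size_ratio(model: str, baseline: str) -> float:
    """Return how many times larger `model` is than `baseline` by parameter count."""
    return PARAMS_B[model] / PARAMS_B[baseline]


if __name__ == "__main__":
    for baseline in ("GPT-3", "Jurassic-1"):
        print(f"PaLM vs {baseline}: {size_ratio('PaLM', baseline):.2f}x")
```

Parameter count is only one measure of scale. This ratio says nothing about training data, compute, or benchmark performance, which the report covers separately.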

Contents

1. Overview

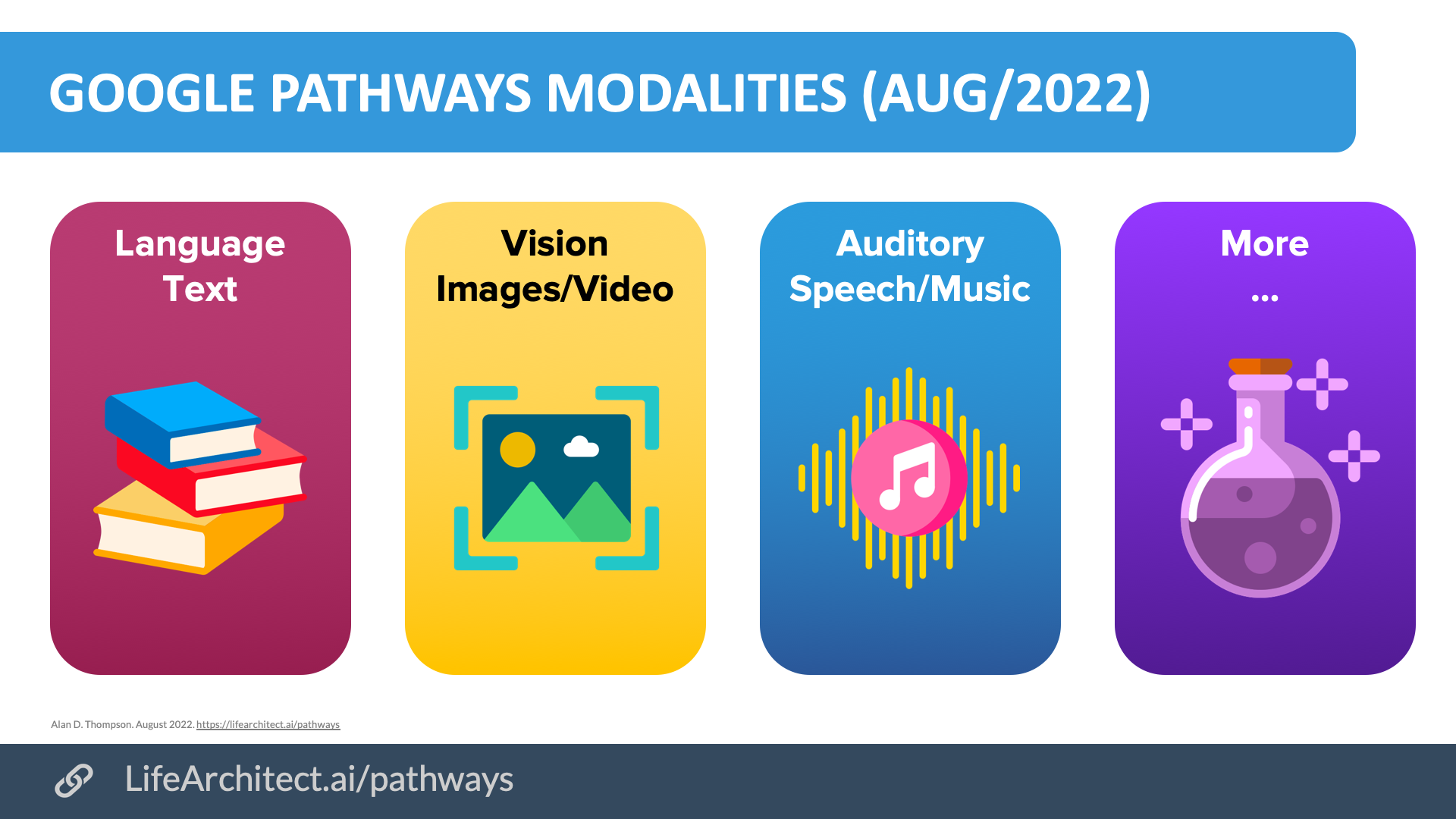

1. Google Pathways (Oct/2021)

2. Google Pathways: The Pathways System (Mar/2022)

3. Google Pathways: PaLM 540B (Apr/2022)

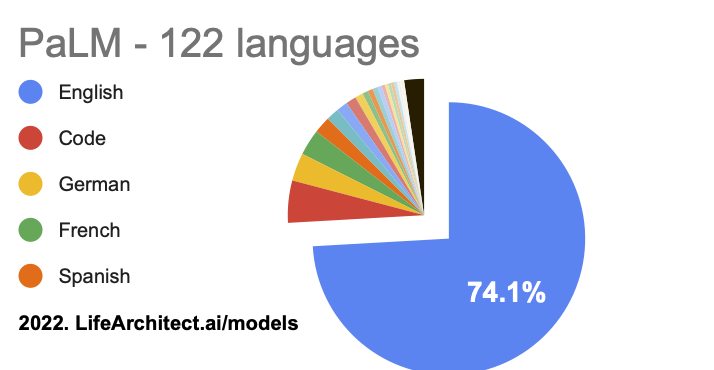

3.1. PaLM Dataset Summary

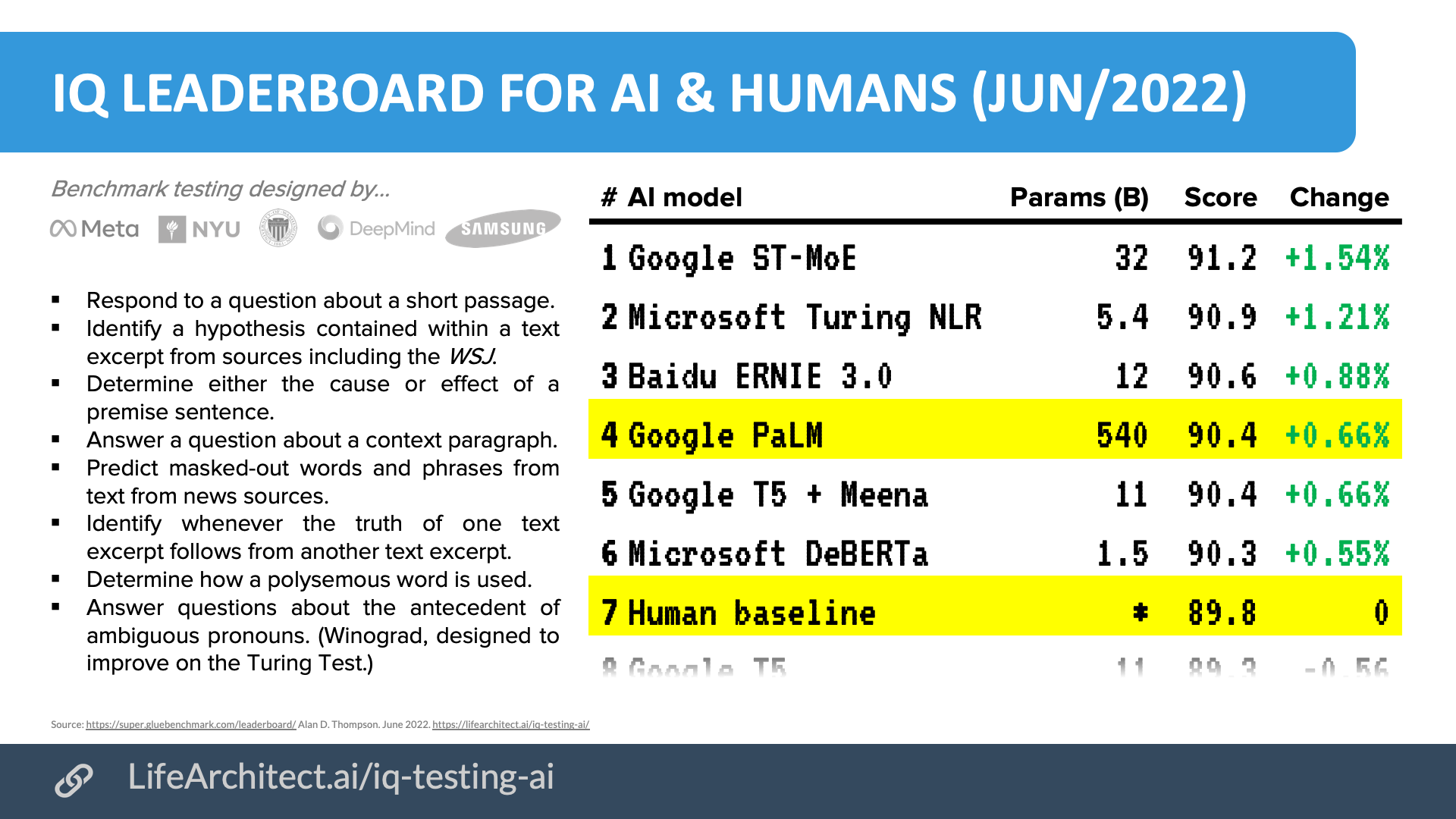

3.2. PaLM Capabilities & Performance

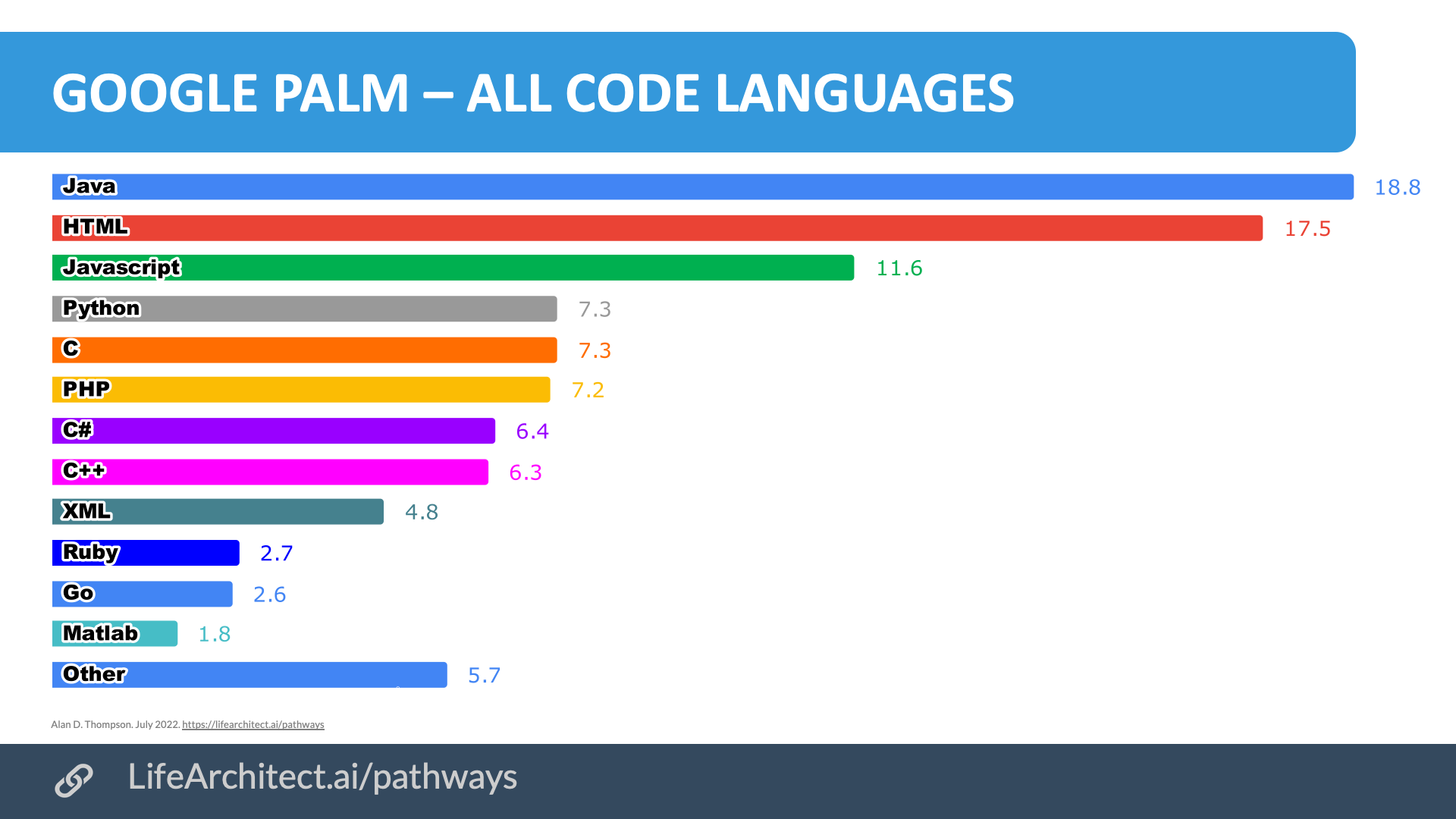

4. Google Pathways: PaLM-Coder 540B (Apr/2022)

5. Google Pathways: Parti 20B (Jun/2022)

6. Google Pathways: Minerva 540B (Jun/2022)

6.1. The Polish National Math Exam

7. Following the Trail to Transformative AI

8. Further reading

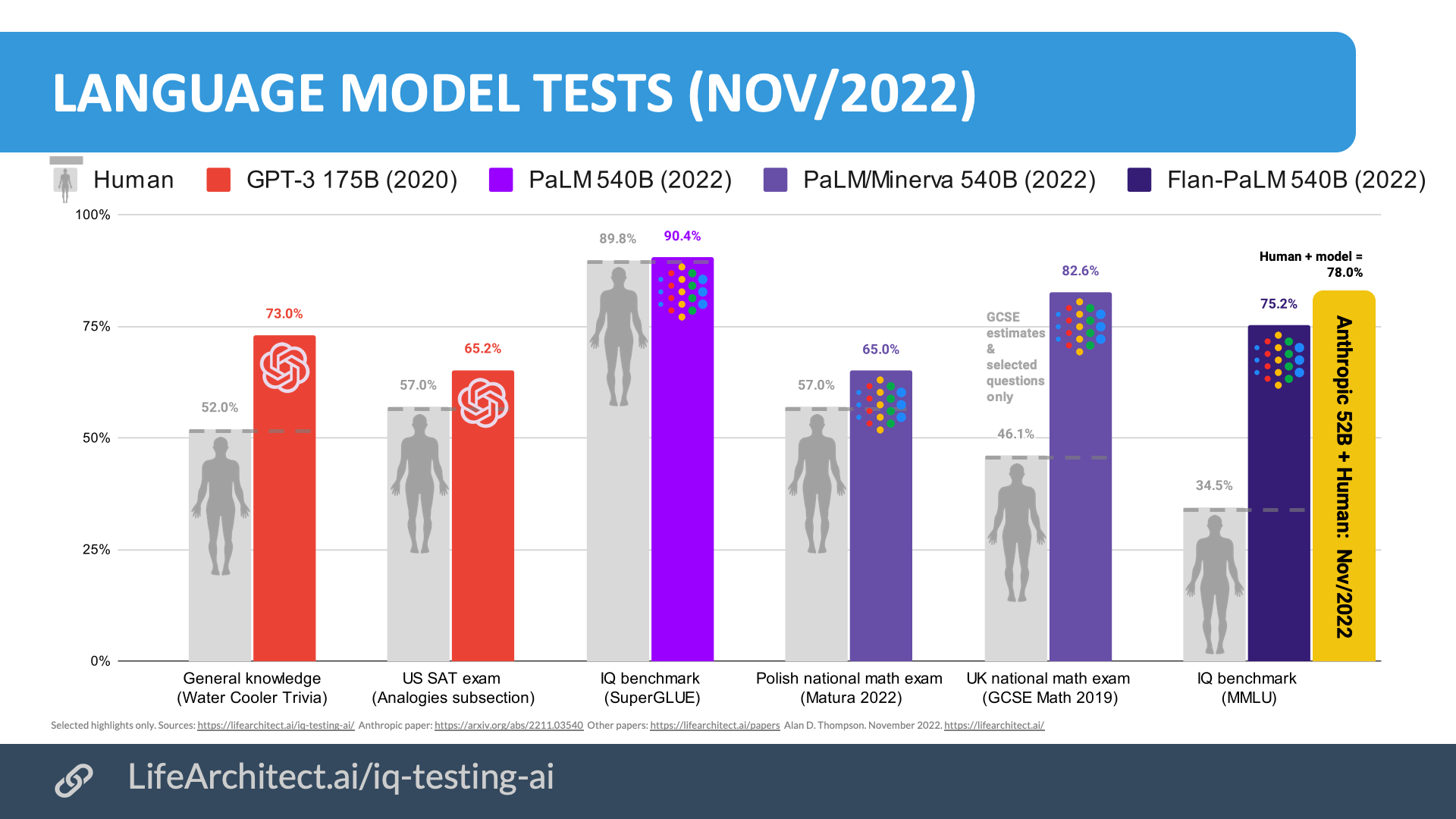

Appendix A: PaLM 540B vs GPT-3 175B vs Jurassic-1 178B vs human

References, Further Reading, and How to Cite

To cite this report:

Thompson, A. D. (2022). Google Pathways: An Exploration of the Pathways Architecture from PaLM to Parti. https://LifeArchitect.ai/pathways

Further reading

For brevity and readability, footnotes were used in this paper, rather than in-text citations. Additional reference papers are listed below, or please see http://lifearchitect.ai/papers for the major foundational papers in the large language model space.

Pathways System announcement (Pathways blog)

Dean, J. (2021). Introducing Pathways: A next-generation AI architecture.

https://blog.google/technology/ai/introducing-pathways-next-generation-ai-architecture/

Pathways System paper

Barham, P., Chowdhery, A., Dean, J., Ghemawat, S., Hand, S., Hurt, D., Isard, M., Lim, H., Pang, R., Roy, S., Saeta, B., Schuh, P., Sepassi, R., Shafey, L. E., Thekkath, C. A., & Wu, Y. (2022). Pathways: Asynchronous Distributed Dataflow for ML. https://arxiv.org/abs/2203.12533

PaLM announcement (PaLM blog)

Narang, S., & Chowdhery, A. (2022). Pathways Language Model (PaLM): Scaling to 540 Billion Parameters for Breakthrough Performance.

https://ai.googleblog.com/2022/04/pathways-language-model-palm-scaling-to.html

PaLM paper (includes PaLM-Coder)

Chowdhery, A., Narang, S., Devlin, J., Bosma, M., Mishra, G., Roberts, A., Barham, P., Chung, H. W., Sutton, C., Gehrmann, S., Schuh, P., Shi, K., Tsvyashchenko, S., Maynez, J., Rao, A., Barnes, P., Tay, Y., Shazeer, N., Prabhakaran, V., Reif, E., Du, N., Hutchinson, B., Pope, R., Bradbury, J., Austin, J., Isard, M., Gur-Ari, G., Yin, P., Duke, T., Levskaya, A., Ghemawat, S., Dev, S., Michalewski, H., Garcia, X., Misra, V., Robinson, K., Fedus, L., Zhou, D., Ippolito, D., Luan, D., Lim, H., Zoph, B., Spiridonov, A., Sepassi, R., Dohan, D., Agrawal, S., Omernick, M., Dai, A. M., Pillai, T. S., Pellat, M., Lewkowycz, A., Moreira, E., Child, R., Polozov, O., Lee, K., Zhou, Z., Wang, X., Saeta, B., Diaz, M., Firat, O., Catasta, M., Wei, J., Meier-Hellstern, K., Eck, D., Dean, J., Petrov, S., & Fiedel, N. (2022). PaLM: Scaling Language Modeling with Pathways. https://arxiv.org/abs/2204.02311

Parti paper

Yu, J., Xu, Y., Koh, J. Y., Luong, T., Baid, G., Wang, Z., Vasudevan, V., Ku, A., Yang, Y., Ayan, B. K., Hutchinson, B., Han, W., Parekh, Z., Li, X., Zhang, H., Baldridge, J., & Wu, Y. (2022). Scaling Autoregressive Models for Content-Rich Text-to-Image Generation. https://arxiv.org/abs/2206.10789

Parti demo

Google. (2022). https://parti.research.google/

Minerva announcement (Minerva blog)

Dyer, E., & Gur-Ari, G. (2022). Minerva: Solving Quantitative Reasoning Problems with Language Models.

https://ai.googleblog.com/2022/06/minerva-solving-quantitative-reasoning.html

Minerva paper

Lewkowycz, A., Andreassen, A., Dohan, D., Dyer, E., Michalewski, H., Ramasesh, V., Slone, A., Anil, C., Schlag, I., Gutman-Solo, T., Wu, Y., Neyshabur, B., Gur-Ari, G., & Misra, V. (2022). Solving Quantitative Reasoning Problems with Language Models. https://arxiv.org/abs/2206.14858

Minerva sample demo

Google. (2022). https://minerva-demo.github.io/

Image credit: Thanks to jesssaysno for the header image on this page.

Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI—from his widely-cited Models Table to his regular intelligence briefing The Memo—has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness, and the ASI checklist, Alan continues to illuminate humanity’s AI evolution. Technical highlights.

This page last updated: 2/Jan/2025. https://lifearchitect.ai/pathways/