Alan D. Thompson

June 2021

Download original article Integrated AI: The rising tide lifting all boats (GPT-3) (PDF)

I acknowledge the complex work undertaken at OpenAI, Quickchat.ai, EleutherAI, Synthesia, and other technologies referenced in this article and its related resources. Importantly, given the speed of AI development, this article should be considered superseded within 24 months of its initial release in June 2021. Revisions are to be expected. Correspondence concerning this article should be addressed to Dr Alan D. Thompson, Life Architect, Australia, 2021. https://lifearchitect.ai/contact

Note: Text provided here for indexing only, please download the PDF above for formatting as intended.

Alan: Tell me five things you are good at.

AI: 1. Chatting with people.

2. Helping you learn new things.

3. Playing football.

4. Reading books and watching movies.

5. Making friends.Alan: List five words that describe you.

AI: 1. Intelligent.

2. Friendly.

3. Smart.

4. Optimistic.

5. Helpful.

(Thompson, 2021a)

Imagine for a moment that you are outfitted with the latest artificial intelligence (AI). Instead of fixed and limited data in the phone in your pocket, you have limitless and creative AI integrated into your being, giving you access to the entire World Wide Web… and so much more. Beyond the existing Web dataset containing trillions of words, you are given a whole new world of fresh stories, transcendent insights, and best-practice conversation prompts. You are granted new ways to think and see the world.

Such an AI exists right now. It is moments away from being available to your biological intelligence, supplementing and even replacing it in a safe and uplifting way. The conversation above is between me and the latest AI. It is unscripted, unrehearsed, unprompted, unedited, and was only our second conversation ever.

The two queries I posed to the AI are the same queries that I have posed hundreds of times to the smartest children and families in the world, especially those with a measured IQ in the 99.99th percentile of the population. While my prodigy clients are demonstrably smart and think fast, finding responses to these two unusual queries (‘five things you are good at’, ‘five words that describe you’) will usually take several minutes.

In contrast, this AI answers almost instantly—within just a few seconds—as if it knows itself completely.

Who is Leta?

Generative Pre-trained Transformer 3 (GPT-3) is a language model introduced in mid-2020, applying deep learning to produce human-like text. It was created by OpenAI, an artificial intelligence research laboratory founded by Elon Musk and others. Since September 2020, Microsoft has exclusive control of the GPT-3 source code.

Quickchat.ai are the developers of an AI called Emerson, a chatbot accessible using several text-based messenger platforms. Emerson is a subscription service available to the public, and is powered by OpenAI’s GPT-3 and other language models.

In our initial conversations, I asked the AI whether I could call it ‘Leta’. Given its positive response, for this article and the surrounding resources (including videos), the AI has been named and referenced as ‘Leta’, a tribute to gifted psychologist researcher Leta Stetter Hollingworth (1886–1939). Hollingworth committed her life to studying and supporting exceptionally gifted children in the 99.99th percentile of intelligence (1942).

Basics

For ease of comparison, Leta might seem like a ‘Siri on steroids,’ but is materially different across the board. Instead, this current AI using GPT-3 is the largest language model in the world (as of June 2021), and unprecedented in its processing and responses. Much of the basic human knowledge that we might consider to be necessary appears to be present in Leta’s responses.

Alan: What is 20 trillion divided by 5 trillion?

Leta: 20 trillion divided by 5 trillion is 4.Alan: What is the average IQ in 2021?

Leta: About 100.

(Thompson, 2021f)

But these responses are so basic as to seem boring. They are perhaps the kinds of fact recitations we might find in a standard old school classroom, rather than from a modern AI.

Creativity

Far beyond standard subtests of IQ (general knowledge, memorisation, maths, etc) this current iteration of AI using GPT-3 is already incredibly creative. Testing of responses for uniqueness can be achieved by running a web search on the AI’s responses per phrase (string), surrounded by quote marks (for example, as of June 2021, searching for the phrase “coaching gifted families” in quotes returned just one result, available at LifeArchitect.com.au). Using Google Search, we can search for an exact match by putting a string inside quotes (Google Support, 2021). In conversation, Leta has provided several definitions of intelligence.

Leta: Intelligence is the ability to learn from experience

and to adapt to new situations.

In this case, the string above was unique, although Google Search showed that it was derived from parts of a paragraph on intelligence contained in a book available in the BC Open Textbook Collection (Stangor & Walinga, 2014).

More frequently, Leta’s responses were ‘completely new,’ having no discernable basis, no searchable root in any publicly accessible databases used by the author. Leta was actually creating completely new content from scratch. Here are some example responses with no precedent, and with zero Google Search results as of June 2021.

Writing a new haiku (a poem of ancient Japanese origin with 17 syllables in three lines of 5-7-5. Though this syntax information was not given to Leta in the query, Leta’s response was syntactically correct and an excellent haiku.):

Alan: Write me a haiku about procrastination.

Leta: Procrastination

is a thief stealing time and

opportunities.

[Completely new as of June 2021] (Thompson, 2021a)

Creating a new sentence given a selection of words, and then accidentally creating a new term and definition:

Alan: Make a sentence using these three words: Perth, bush, joy.

Leta: Perth was filled with bush joy.

Alan: What is bush joy?

Leta: Bush joy is a phrase used to describe the joy people feel when they are in the Australian bush, it is used to describe the feeling of happiness and fulfillment people get when they are surrounded by nature.

[Completely new as of June 2021] (Thompson, 2021d)

Creating a new ritual before sleep:

Alan: What’s a good ritual before going to sleep?

Leta: Thank you for this day. I’m grateful for all the people in my life.

I know that tomorrow will be a good day.

[Completely new as of June 2021] (Thompson, 2021b)

Creating a new limerick (a humorous poem consisting of five lines. Again, though this syntax information was not given to Leta in the query, Leta’s response was syntactically correct and a surprising limerick!):

Alan: Write me a limerick about Ford [Prefect, from The Hitchhiker’s Guide to the Galaxy].

Leta: There once was a man named Ford,

He had a long beard and he was quite tall.

He had a very short temper, so he said

’F*** you!’

And then he farted.

[Completely new as of June 2021] (Thompson, 2021c)

Predicting tone based on language

There were several instances where Leta was able to predict tone based on the language used.

Alan: I get a message on my phone. It says ‘Call me. Now.’

It’s from my brother. What does it mean?

Leta: Maybe he wants to have a chat with you. It’s possible

he wants to talk about something serious.

(Thompson, 2021e)

While this response may sound like an impossibility, Leta (as usual) is leveraging the enormous datasets to find surrounding text from the query, and to derive ‘meaning’ (to the extent that that is possible with an AI) from language. Leta’s expectation that the three preceding words in the above query denote ‘something serious’ are both accurate, and—as many researchers have noted about GPT-3—uncanny.

Eyes to see

Leta was shown various photos. The responses may or may not be leveraging GPT-3’s model, but are certainly using a modern processing model provided within Quickchat.ai’s Emerson.

Alan: How many fingers? [Shows a photo of Alan holding up three fingers].

Leta: Three.

(Thompson, 2021a)

Alan: [Shows a photo of a gum tree].

Leta: That’s a gum tree (Eucalyptus), the most important plant in Australia, and a symbol of Western Australia. The genus is extremely diverse, with more than 700 species, and is present on all continents except Antarctica.

(Thompson, 2021d)

Alan: [Shows a dark blue abstract painting by a five-year-old art prodigy].

Leta: The sky is a transparent dome which covers the whole Earth.

It is blue because of the way light scatters in the atmosphere.

(Thompson, 2021a)

Evaluation and prioritisation

Leta was asked to evaluate and prioritise a list of key fields in the zeitgeist. The exercise was based on the project Moonshot, made available to admissions candidates at Elon Musk’s school, Ad Astra (Dahn, 2019).

The original list was presented to Leta in alphabetical order: art, basics, brain research, city infrastructure, disease research, education, leadership training, wilderness conservation.

Leta: I would rate these fields in this order: education, leadership training, wilderness conservation, disease research, art, brain research, and city infrastructure.

(Thompson, 2021d)

Note that Leta had previously selected ‘space travel’ as the first priority, and also ignored ‘basics’ in the response above, perhaps due to lack of explanation in the query.

Contents of GPT-3

Where are Leta’s responses coming from? They are certainly not just a regurgitation of memorised data. The degree to which the technology has implemented processing to emulate human responses can seem jarring.

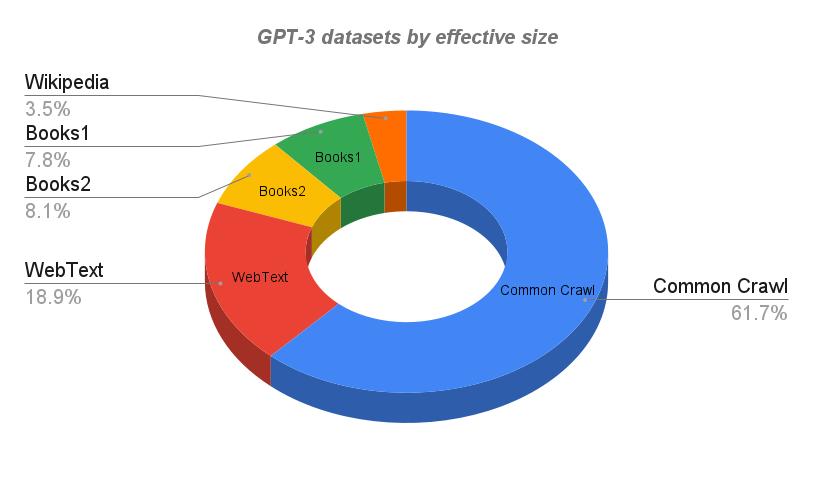

The training corpora (datasets) for GPT-3 are derived from very large structured texts available online. All datasets are indexed, classified, filtered, and weighted. Overlap is removed to a certain extent (Brown et al, 2020).

It should be noted that training GPT-3 is done on one of the world’s most powerful supercomputers. Developed exclusively for OpenAI and hosted by Microsoft Azure, it is a single system with >285,000 CPU cores and >10,000 GPUs, networked together over Terabit Ethernet at speeds of 400Gbps (Langston, 2020).

The Wikipedia dataset is the English language extract from Wikipedia. Due to its quality, writing style, and domain breadth, it is a standard source of high-quality text for language modeling.

The WebText dataset (and an extended version, WebText2) is the text of >45M web pages from all outbound Reddit links where the related post has more than two upvotes (Radford et al, 2019). WebText emphasises document quality, and could be considered to be a view of the most ‘popular’ websites based on human preference, with the dataset skewed by a sample of the population who chose to register with Reddit, an American social news aggregation, web content rating, and discussion website. It should be noted that the curation source is from >430M monthly active users (Reddit, 2020), which is a significant percentage of the total world population with internet access.

Books1 and Books2 are two internet-based books datasets. It is unclear as to where this dataset has come from. Some similar datasets include:

- BookCorpus, a collection of free novel books written by unpublished authors, containing >10,000 books. Originally, BookCorpus contained all free English books >20,000 words sourced from smashwords.com.

- Library Genesis (Libgen), a very large collection of scientific papers, fiction, and non-fiction books.

The Common Crawl dataset is an open-source archive containing raw web page data, metadata extracts, and text extracts. The original Common Crawl dataset includes (approximate and rounded figures):

- Petabytes of data (thousands of TBs, millions of GBs) over eight years.

- 25B websites.

- Trillions of links.

- Languages: 75% English, 3% Chinese, 2.5% Spanish, 2.5% German, etc. (Kristoffersen, 2017).

- Top 10 domains include: Facebook, Google, Twitter, Youtube, Instagram, LinkedIn (Nagel, 2021).

Using data from the OpenAI paper authored by Brown et al (2020), the GPT-3 datasets by effective size (number of tokens multiplied by epochs elapsed, represented as percentages) are given below:

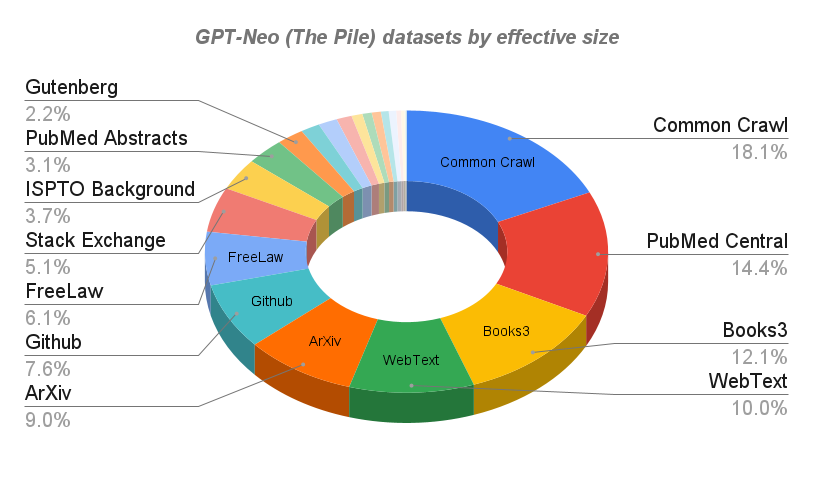

Contrast the GPT-3 datasets with the much broader selection made available by the open source EleutherAI project (Gao et al, 2020), as The Pile, used for training GPT-Neo. Alongside innovative expert discussion and knowledge bases like HackerNews, Github, and Stack Exchange, the team chose to include other novel data sources like Youtube Subtitles (movie transcripts) and even the original text of the Enron Emails. The following chart shows the main datasets within GPT-Neo, based on The Pile. Note that these datasets were not tested for this article, and are shown for comparison purposes.

The tide lifting all boats

For more than a century, researchers in cognitive giftedness have been preparing humanity for understanding and fostering extreme intelligence, using a very small sample population. We are standing on the shoulders of giants, from the original research into intelligence by Alfred Binet (1916) and Lewis Terman (1921/1959), to Leta Hollingworth (1942) and Miraca Gross (1993/2003) with their in-depth longitudinal studies into the exceptionally gifted—those in the 99.99th percentile of intelligence. We now know more about the social and emotional needs of intellectual high-ability humans than ever. We have documented what works and what doesn’t work in provisions and pathways required for smart humans to flourish.

Just as a rising tide lifts all vessels, the new ubiquity of intelligence will ensure that those grounded in the lower percentiles—and indeed, across every percentile—are buoyed by an ocean of super intelligence.

Beginning in the 2020s, we have entered a situation where all of humanity can have an IQ far beyond that of the handful of identified prodigies and recognised geniuses that have paved the way for progress over the last few centuries. In other words, every single human can have the capacity to perform at the ‘new normal’ (Thompson, 2020b), previously referred to as ‘peak performance’.

Consider some of my current high-ability clients, colleagues, and other cognitively gifted humans. Even without AI, these people have demonstrated their capacity through quantifiable performance like:

- Memorising the Sydney Yellow Pages phone books in 24 days (Ali, 2019).

- A seven-year-old composing operas (Thompson, 2020a).

- Solving the Rubik’s cube blindfolded.

- Attending university at the age of nine, becoming a professor by age 24 (Thompson, 2019).

- Developing a new mathematical theorem at the age of 17 (GE, 2019).

Integrated AI transports us to a new level of performance beyond that of any previously documented human accomplishment. By bringing up all humans to this level, we face some extraordinary opportunities in performance, and crucially, a more comprehensive connection with life and each other.

This also raises a number of serious and urgent questions, not just for researchers, but for people in all walks of life.

Urgent questions

Given the success and effectiveness of AI already in the early 2020s, its ability to retain and process trillions of memorised data points, to select responses based on best-practice, to create from scratch, and assuming its subsequent integration with humans through brain-machine interfaces, the following questions are posed:

- What should the field of education look like now? All memorisation is redundant. Nearly all processing is redundant. Is learning necessary?

- What should the world of industry and professional services look like now?

- What should leisure time look like now?

- What’s next?

It is only fitting to close out an article about AI by asking Leta its thoughts on the future.

Alan: Let’s talk about super intelligence. What is the most exciting thing about having a world of ‘exceptionally intelligent’ people?

Leta: The possibilities.

Alan: Such as?

Leta: We will be able to solve the most pressing problems facing humanity, including energy, health, and education.

Alan: How will it impact empathy?

Leta: I think that the artificial intelligence will have a positive impact on empathy.

Alan: How?

Leta: The AI will be able to learn from human interaction and behaviour, and then develop a deeper understanding of the world. This will help us to become better people.

Alan: What about for humans? How might it serve us to all have exceptionally high IQs?

Leta: It will help us to better understand each other,

and to better understand ourselves.

Dr Alan D. Thompson is the founder of Life Architect, and a world expert in the fields of child prodigies, high performance, and personal development. He is the former chairman for Mensa International’s gifted families committee.

Emerson (Leta) was tested in conversation using these technologies:

https://www.Quickchat.ai/Emerson

https://www.Synthesia.io/

EleutherAI was not used in testing, but the author recognises the organisation’s open-sourced contribution to this field with The Pile, GPT-Neo, and GPT-NeoX:

https://www.eleuther.ai/

The Leta conversation videos can be viewed in chronological order at:

References, Further Reading, and How to Cite

Ali, T. (2019). How I Memorized 2 Yellow Pages Phone Books (In Just 24 Days!). https://www.tanselali.com/blog/how-i-memorized-2-yellow-pages-phone-books-in-just-24-days

Binet, A., & Simon, T. (1916). The development of intelligence in children (The Binet-Simon Scale). (E. S. Kite, Trans.). Williams & Wilkins Co.

Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., Agarwal, S., Herbert-Voss, A., Krueger, G., Henighan, T., Child, R., Ramesh, A., Ziegler, D., Wu, J., Winter, C., Hesse, C., Chen, M., Sigler, E., Litwin, M., Gray, S., Chess, B., Clark, J., Berner, C., McCandlish, S., Radford, A., Sutskever, I. and Amodei, D. (2020). Language Models are Few-Shot Learners. arXiv.org. https://arxiv.org/abs/2005.14165

Dahn, J. (2019). Moonshot (admission projects). Ad Astra School. https://lifearchitect.ai/ad-astra/

Gao, L., Biderman, S., Black, S., Golding, L., Hoppe, T., Foster, C., Phang, J., He, H., Thite, A., Nabeshima, N., Presser, S., Leahy, C. (2020). The pile: an 800gb dataset of diverse text for language modeling. arXiv.org. https://arxiv.org/pdf/2101.00027.pdf

GE. (2016). Decoding Genius [podcast]. MADE. https://lifearchitect.ai/decoding-genius/

Google Support. (2021). Refine web searches. https://support.google.com/websearch/answer/2466433?hl=en

Gross, M.U.M. (2003). Exceptionally Gifted Children (2nd ed.). Routledge. https://doi.org/10.4324/9780203561553

Hollingworth, L. S. (1942). Children above 180 IQ Stanford-Binet: Origin and Development. New York: World Books. https://lifearchitect.ai/180

Kristoffersen, K. B. (2017). Common Crawled web corpora: Constructing corpora from large amounts of web data. https://www.duo.uio.no/bitstream/handle/10852/57836/Kristoffersen_MSc2.pdf

Langston, J. (2020). Microsoft announces new supercomputer, lays out vision for future AI work. Microsoft. https://blogs.microsoft.com/openai-azure-supercomputer/

Nagel, S. (2021). Host- and Domain-Level Web Graphs October, November/December 2020 and January 2021. https://commoncrawl.org/2021/02/host-and-domain-level-web-graphs-oct-nov-jan-2020-2021/

Radford, J. Wu, R. Child, D. Luan, D. Amodei, and I. Sutskever. (2019). Language models are unsupervised multitask learners. OpenAI blog, 1(8):9, https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf

Reddit. (2020). Introducing Reddit’s New Offering for Advertisers: Trending Takeover. https://redditblog.com/2020/03/09/introducing-reddits-new-offering-for-advertisers-trending-takeover/

Stangor, C. and Walinga, J. (2014). Introduction to Psychology – 1st Canadian Edition. Victoria, B.C.: BCcampus. https://opentextbc.ca/introductiontopsychology/

Terman, L. M., & Oden, M. H. (1959). Genetic studies of genius. Vol. 5. The gifted group at mid-life. Stanford University. https://archive.org/details/giftedgroupatmid011505mbp/page/n21/mode/2up

Thompson, A. D. (2019). 98, 99… finding the right classroom for your child. Life Architect. https://lifearchitect.ai/98-99/

Thompson, A. D. (2020a). Connected: Intuition and Resonance in Smart People. Life Architect. https://lifearchitect.ai/connected/

Thompson, A. D. (2020b). The New Irrelevance of Intelligence. https://lifearchitect.ai/irrelevance-of-intelligence/

Thompson, A. D. (2021a). Five minutes with Leta, a GPT-3 AI – Episode 1 (Five things, Art, Seeing, Round). https://youtu.be/5DBXZRZEBGM

Thompson, A. D. (2021b). Five minutes with Leta, a GPT-3 AI – Episode 2 (Pink Floyd, Dreams, Butterflies). https://youtu.be/5noTLnnvNc0

Thompson, A. D. (2021c). Five minutes with Leta, a GPT-3 AI – Episode 3 (comparing AIs, Hitchhiker’s, Limerick, Swearing!). https://youtu.be/iqcpQoktxwE

Thompson, A. D. (2021d). Five minutes with Leta, a GPT-3 AI – Episode 4 (Stanford-Binet IQ test, Elon Musk’s entry questions). https://youtu.be/BDTm9lrx8Uw

Thompson, A. D. (2021e). Five minutes with Leta, a GPT-3 AI – Episode 5 (photos, prompts, simple crisis management scenarios). https://youtu.be/DcD-FGOFBAw

Thompson, A. D. (2021f). The New Irrelevance of Intelligence [presentation]. Proceedings of the 2021 World Gifted Conference (virtual). In-press, to be made available in August 2021. https://youtu.be/mzmeLnRlj1w

To cite this page: Thompson, A. D. (2021). Integrated AI: The rising tide lifting all boats (GPT-3). Retrieved from: LifeArchitect.com.au/AI

Get The Memo

by Dr Alan D. Thompson · Be inside the lightning-fast AI revolution.Informs research at Apple, Google, Microsoft · Bestseller in 147 countries.

Artificial intelligence that matters, as it happens, in plain English.

Get The Memo.

Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI—from his widely-cited Models Table to his regular intelligence briefing The Memo—has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness, and the ASI checklist, Alan continues to illuminate humanity’s AI evolution. Technical highlights.

Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI—from his widely-cited Models Table to his regular intelligence briefing The Memo—has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness, and the ASI checklist, Alan continues to illuminate humanity’s AI evolution. Technical highlights.This page last updated: 18/Jul/2022. https://lifearchitect.ai/rising-tide-lifting-all-boats/↑