The Memo.

Alan D. Thompson

November 2022 (updates March 2023, November 2025, April 2026)

Update 22/Apr/2026:

Google shows off 1,302 real-world gen AI use cases from the world’s leading organizations.

Update 5/Nov/2025:

OpenAI announced one million enterprise customers for the GPT-5 era.

Update 20/Mar/2023:

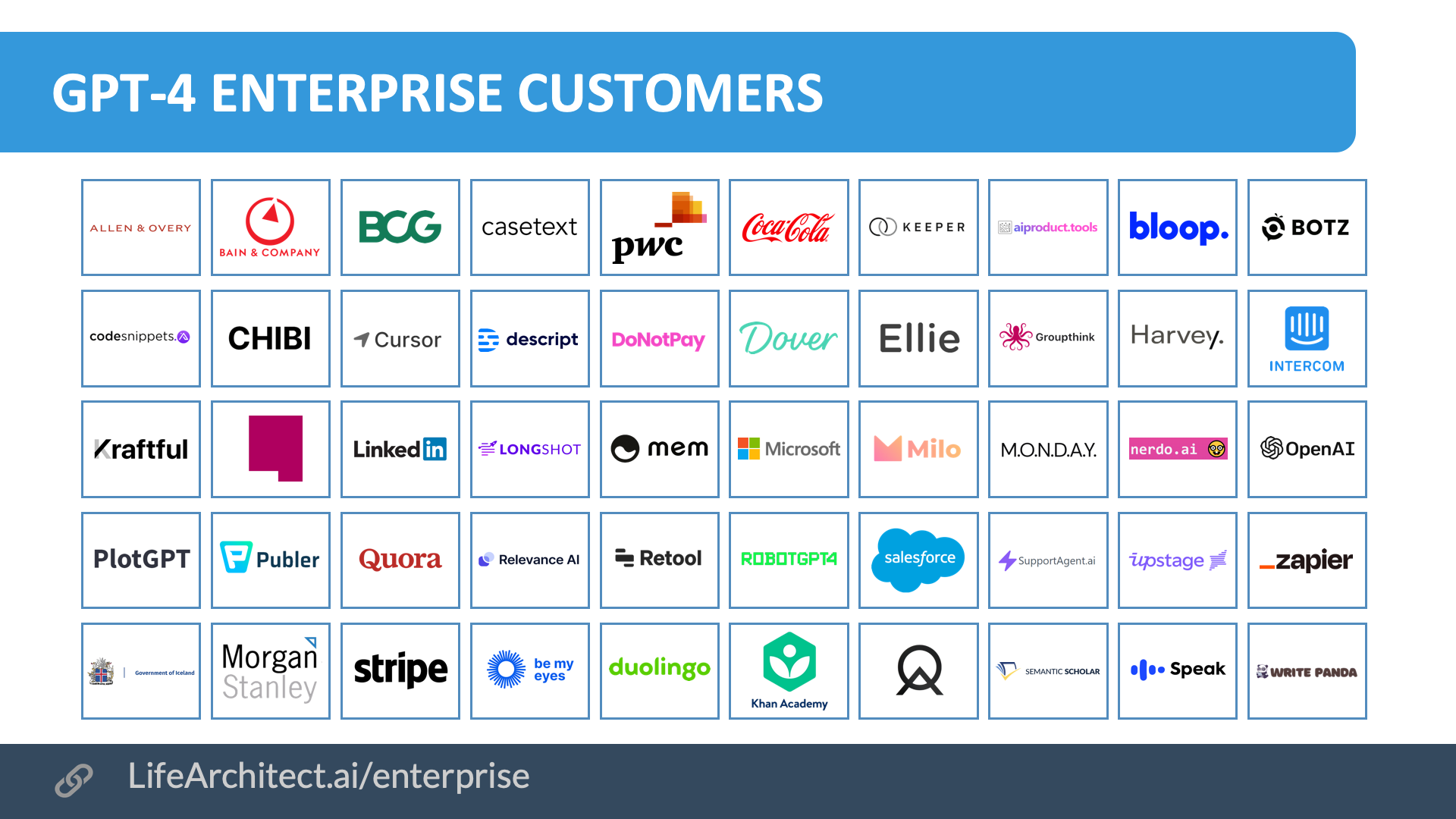

The first 50 GPT-4 enterprise customers are detailed in the sheet below:

GPT-4 enterprise customers: View the full data (Google sheets)


Original article: GPT-3 in the enterprise

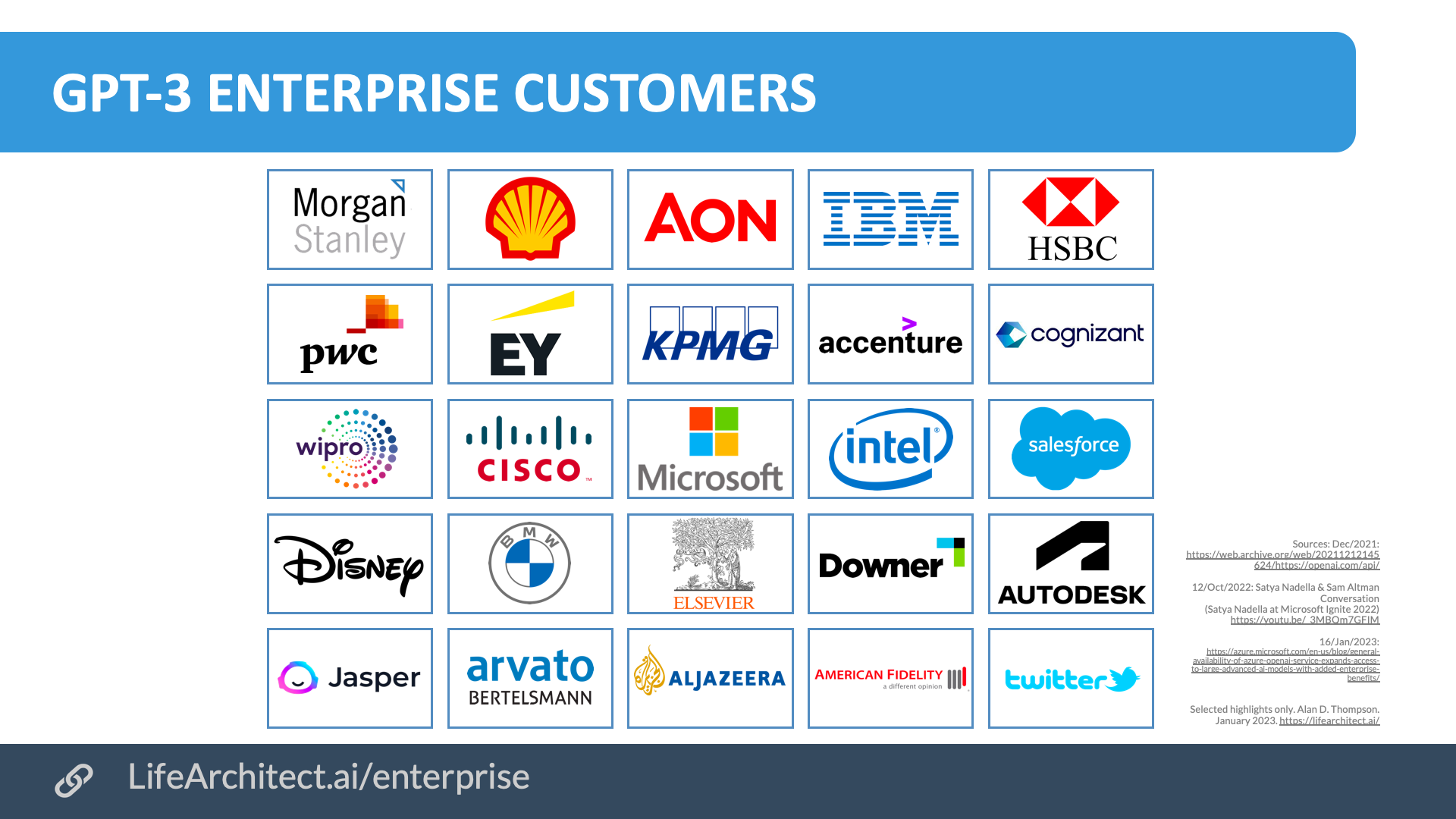

In the second half of 2022, large language models were applied to all kinds of business processes, resulting in enormous increases in enterprise value. Nearly two years after it was confirmed that GPT-3 was generating the equivalent of one new book per second, and an entire US public library per day (80,000 books), Microsoft’s Satya Nadella and OpenAI’s Sam Altman announced a list of GPT-3 and Codex clients in October 2022:

Accenture, AI Busters, Alert Innovation, American Fidelity, Aon, Arvato Bertelsmann, Autodesk, Avanade, BMW, Carmax, Cipio.ai, Clevertar, Cognizant, Databook, Downer, EY, Farmlands Cooperative, Genie AI, Elsevier Health, HSBC, IFAD, Inpris, Inward, Kept, Klaviyo, Morgan Stanley, National Nederlanden, PwC, Shell, Snelstart, Sogeti, Soul Machines, Strabag, Trelent, Wipro, WordLift, Zammo.ai

In a recent keynote to around 4,000 developers representing companies like Google AI and Microsoft, I noted just how deeply AI has penetrated the corporate world. After demonstrating some of the latest applications of GPT-3 (a Google Sheets function, an English-to-SQL converter, an instant storybook generator), I discussed the current state of AI in the enterprise.

I continue to work with some of the biggest Fortune 500s in the world. I’ve [been involved with large language models including GPT-3] being implemented for internal applications. Also [I’ve been providing boards, management, and staff with] internal training, so people understand what’s going on across industries. This quarter, for me it’s been: health, retail, engineering, fashion, pharmaceuticals. I wonder if there are any Fortune 500s that have not played around with it. Even governments have been playing around with it. OpenAI has admitted that these clients have already played with the GPT-3 model internally; I’ve put Microsoft in there because Microsoft invested the one billion dollars that allowed GPT-3 to have access to 10,000 GPUs and 285,000 CPU cores during its training.

— Dr Alan D. Thompson, Devoxx keynote, Brussels (Oct/2022)

My experience with short-term consulting and longer-term advisory on AI for many of the world’s largest companies was articulated by OpenAI’s CEO recently:

…a number of Fortune 500 companies are now using a combination of our Embeddings and Completions endpoint together to drive powerful search features that significantly improve current processes. Compliance teams can use these capabilities to search complicated external standards documentation, and then compare them to their own internal policies. And if they find any gaps, they can even use these capabilities to suggest new language.

This is the type of application that cuts time spent for manually comparing documents from many weeks down to hours. Customers are also using that combination of Embeddings and Completions to help employees quickly find and synthesize answers from across a massive internal knowledge base.

— Sam Altman, Microsoft Ignite (Oct/2022)
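The search-then-generate pattern described in the quote above can be sketched in a few lines. This is only an illustration under stated assumptions, not OpenAI's or any client's implementation: the `embed` function is a toy bag-of-words stand-in for a real embeddings endpoint, `build_prompt` only assembles the text that would be sent to a completions endpoint (no API is called), and the policy snippets are invented for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embeddings endpoint: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by similarity to the query and return the best top_k."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Assemble the text that would be sent to a completions endpoint."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the passages below.\n"
        f"{context}\n"
        f"Question: {query}\n"
        "Answer:"
    )


# Hypothetical internal policy snippets, invented for illustration.
POLICIES = [
    "Customer records must be retained for seven years after account closure.",
    "Employees must complete security training within 30 days of joining.",
    "Travel expenses above 500 dollars require manager approval.",
]

query = "How long must customer records be retained?"
top = search(query, POLICIES)
prompt = build_prompt(query, top)
```

In a production system the toy `embed` would be replaced by learned embeddings, and `prompt` would be passed to a language model to synthesize the answer. The structure stays the same: retrieve the most relevant passages first, then generate from them.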

Microsoft issued some brief case studies in January 2023:

“Al Jazeera Digital is constantly exploring new ways to use technology to support our journalism and better serve our audience. Azure OpenAI Service has the potential to enhance our content production in several ways, including summarization and translation, selection of topics, AI tagging, content extraction, and style guide rule application. We are excited to see this service go to general availability so it can help us further contextualize our reporting by conveying the opinion and the other opinion.”—Jason McCartney, Vice President of Engineering at Al Jazeera.

“KPMG is using Azure OpenAI Service to help companies realize significant efficiencies in their Tax ESG (Environmental, Social, and Governance) initiatives. Companies are moving to make their total tax contributions publicly available. With much of these tax payments buried in IT systems outside of finance, massive data volumes, and incomplete data attributes, Azure OpenAI Service finds the data relationships to predict tax payments and tax type—making it much easier to validate accuracy and categorize payments by country and tax type.”—Brett Weaver, Partner, Tax ESG Leader at KPMG.

— Case studies via Microsoft (16/Jan/2023)

Many enterprise clients leverage existing large language models (including, but not limited to, GPT-3 and GPT-3-based platforms like Quickchat.ai) to rapidly prototype AI applications that can achieve spectacular results. Some selected use cases include:

- Internal process automation.

- Product marketing material development.

- Summarization of meeting transcripts.

- Brainstorming and idea generation (see Mattel for their use of OpenAI’s DALL·E 2 in generating new toy ideas).

- External/customer-facing chatbots.

- Internal knowledge bases and chatbots.

OpenAI expanded on one of these case studies in October 2022, mentioning the $100B+ multinational investment management firm Morgan Stanley.

For example, Morgan Stanley is building an AI assistant that helps their tens of thousands of wealth managers better support their clients. The assistant combines search and content creation, so that wealth managers can quickly find and tailor the right information for every client at any moment.

— Sam Altman, Microsoft Ignite (Oct/2022)

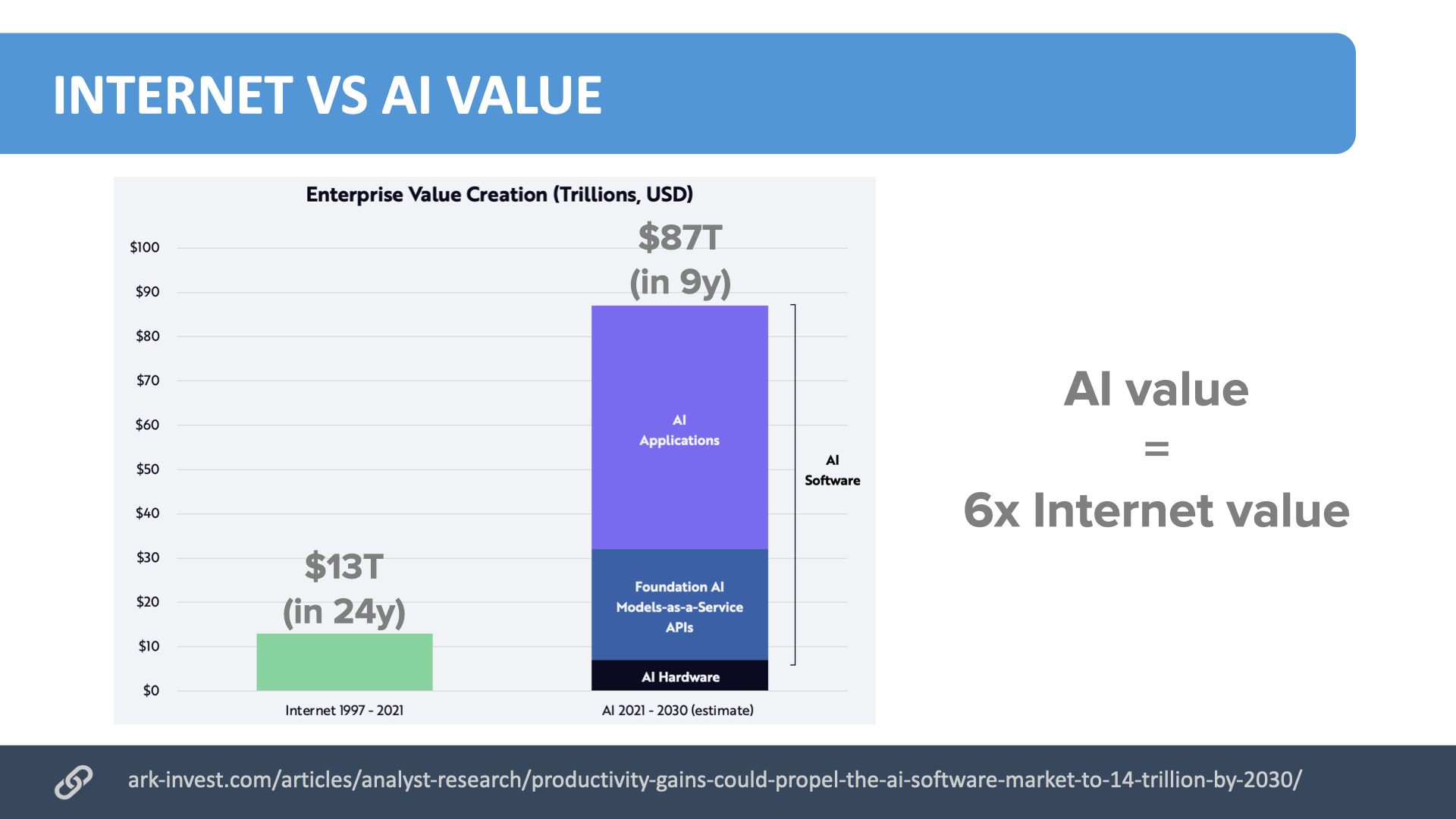

As more and more businesses around the world leverage these AI models for enterprise value creation, ARK Invest expects that applying these models to business processes will create $87T in enterprise value by 2030.

Of that, around $25T comes from the model APIs (like OpenAI’s GPT-3 and EleutherAI’s GPT-NeoX-20B), and more than double that (around $55T) is unlocked by the new AI apps being developed. While revenue from these applications is presently skewed towards a few big players like Jasper AI and Copilot, hundreds of new applications are being released. As of November 2022, the approval process for GPT-3 apps has been removed, simplifying the release of new applications even further.

As many of the blue chips have already been working with these AI models for more than two years, smaller businesses (and slower corporations!) will now be playing catch-up. And if AI is said to be moving at lightning speed, its business adoption may be more urgent than ever. As one of my colleagues at Superlegal.ai notes: “AI will not replace lawyers, but lawyers who use AI will certainly replace those who don’t.”

Are you ready?


References, Further Reading, and How to Cite

Thompson, A. D. (2022). AI and GPT-3 in the enterprise. https://LifeArchitect.ai/enterprise

Further reading

“IBM, Twitter, Salesforce, Cisco, Intel, Disney”

Dec/2021: https://web.archive.org/web/20211212145624/https://openai.com/api/

“Accenture, AI Busters, Alert Innovation, American Fidelity, Aon, Arvato Bertelsmann, Autodesk, Avanade, BMW, Carmax, Cipio.ai, Clevertar, Cognizant, Databook, Downer, EY, Farmlands Cooperative, Genie AI, Elsevier Health, HSBC, IFAD, Inpris, Inward, Kept, Klaviyo, Morgan Stanley, National Nederlanden, PwC, Shell, Snelstart, Sogeti, Soul Machines, Strabag, Trelent, Wipro, WordLift, Zammo.ai”

12/Oct/2022: Satya Nadella & Sam Altman Conversation (Satya Nadella at Microsoft Ignite 2022) https://youtu.be/_3MBQm7GFIM


Alan D. Thompson is a world expert in artificial intelligence, advising everyone from Apple to the US Government on integrated AI. Throughout Mensa International’s history, both Isaac Asimov and Alan held leadership roles, each exploring the frontier between human and artificial minds. His landmark analysis of post-2020 AI (from his widely-cited Models Table to his regular intelligence briefing The Memo) has shaped how governments and Fortune 500s approach artificial intelligence. With popular tools like the Declaration on AI Consciousness and the ASI checklist, Alan continues to illuminate humanity’s AI evolution.

This page last updated: 1/May/2026. https://lifearchitect.ai/enterprise/